18/04/2002

The modern automobile is rapidly transforming from a mere mode of transport into a sophisticated digital hub. At the heart of this evolution lies the integration of advanced technology, particularly in-car digital voice assistants. These systems are revolutionising how drivers interact with their vehicles, promising enhanced safety, convenience, and a more intuitive user experience. Gone are the days of fumbling with buttons and dials; now, a simple voice command can unlock a wealth of functionalities, making every journey smoother and more connected.

- Understanding the In-Car Digital Voice Command Recognition System

- The Intelligent Mechanics Behind Voice Recognition

- The Foundation: Android Automotive and Car Service

- Benefits of In-Car Digital Voice Assistants

- Comparing Interaction Methods: Buttons vs. Voice

- The Road Ahead: Future Enhancements in In-Car Voice Technology

- Frequently Asked Questions About In-Car Voice Systems

- Q1: Are in-car voice command systems safe to use while driving?

- Q2: Can these systems understand different accents or dialects?

- Q3: Do I need an internet connection for the voice commands to work?

- Q4: Can I customise the voice commands or add new ones?

- Q5: How do these systems handle background noise in the car?

Understanding the In-Car Digital Voice Command Recognition System

An in-car digital voice command recognition system is an artificial intelligence (AI)-driven assistant designed to interpret spoken commands from vehicle occupants and translate them into actionable instructions for the car's various systems. This technology leverages machine learning (ML) and natural language processing (NLP) to understand human speech, distinguish specific commands, and execute them, all within the dynamic environment of a moving vehicle.

Key Features Enhancing Your Driving Experience

Modern voice command systems are built with several core functionalities to ensure a robust and user-friendly experience:

- Speech Recognition: This is the foundational element, capturing audio from a microphone and converting spoken words into text. Open-source toolkits such as Vosk, which is built on deep learning acoustic models, are often used for their accuracy, offline capability, and multilingual support. Accurate transcription amid road noise or passenger conversation is essential to the system's effectiveness.

- Command Classification: Once spoken input is converted to text, machine learning models classify it into predefined categories. This allows the system to precisely recognise commands like 'open doors', 'lower windows', 'connect Bluetooth', or 'control steering wheel'. The accuracy of this classification determines how reliably the system responds to user requests.

- Unknown Command Handling: Not every spoken phrase will be a clear, predefined command. A sophisticated system includes mechanisms to handle ambiguous or unrecognised inputs by assigning them an 'Unknown' label. This prevents misinterpretations and ensures the system remains robust and adaptable, providing feedback to the user when a command isn't understood.
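One common way to produce an 'Unknown' label is to reject predictions whose confidence is too low. The sketch below assumes the classifier returns a score per command label; the function name, the label names, and the 0.6 threshold are illustrative assumptions, not details from any particular system.

```python
from typing import Dict

UNKNOWN_LABEL = "Unknown"


def pick_label(scores: Dict[str, float], threshold: float = 0.6) -> str:
    """Return the top-scoring command label, or 'Unknown' if confidence is low."""
    if not scores:
        return UNKNOWN_LABEL
    label, score = max(scores.items(), key=lambda item: item[1])
    return label if score >= threshold else UNKNOWN_LABEL


# Hypothetical classifier outputs (one probability per command label).
confident = {"open_doors": 0.91, "lower_windows": 0.06, "connect_bluetooth": 0.03}
ambiguous = {"open_doors": 0.40, "lower_windows": 0.35, "connect_bluetooth": 0.25}

print(pick_label(confident))  # open_doors
print(pick_label(ambiguous))  # Unknown
```

An alternative is to train 'Unknown' as its own class using out-of-scope phrases; the threshold approach is shown here only because it needs no extra training data.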

The Intelligent Mechanics Behind Voice Recognition

The development of a reliable in-car voice command system involves a meticulous methodology, combining various computational techniques to achieve seamless interaction. Here's a deeper look into the project components that bring these systems to life:

Speech Recognition: Transforming Sound into Data

The first step is converting raw audio into text, which is the job of a speech recognition model. Vosk, for instance, is an open-source toolkit widely used for transcribing spoken input. Its main advantages are offline operation (transcription runs on the device without an internet connection), high accuracy, including in challenging acoustic environments, and support for multiple languages. Vosk can also be customised, so developers can tailor it to in-vehicle vocabularies and accents.
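As a rough illustration of offline transcription, the sketch below reads a WAV file and feeds it to Vosk in chunks. It assumes the `vosk` Python package is installed and that `model_dir` points to an unpacked Vosk model; the function names, the chunk size, and the file paths are choices made for this example.

```python
import json
import wave


def extract_text(result_json: str) -> str:
    """Pull the transcript out of a Vosk result, which is a JSON string."""
    return json.loads(result_json).get("text", "")


def transcribe_wav(wav_path: str, model_dir: str) -> str:
    """Transcribe a mono 16-bit PCM WAV file offline with Vosk."""
    # Imported lazily so extract_text stays usable without Vosk installed.
    from vosk import KaldiRecognizer, Model

    model = Model(model_dir)
    pieces = []
    with wave.open(wav_path, "rb") as wav:
        recognizer = KaldiRecognizer(model, wav.getframerate())
        while True:
            chunk = wav.readframes(4000)
            if not chunk:
                break
            # AcceptWaveform returns True once an utterance is complete.
            if recognizer.AcceptWaveform(chunk):
                pieces.append(extract_text(recognizer.Result()))
        # Flush whatever audio is still buffered.
        pieces.append(extract_text(recognizer.FinalResult()))
    return " ".join(piece for piece in pieces if piece)
```

A call would look like `transcribe_wav("command.wav", "path/to/vosk-model")`, where both paths are placeholders. Vosk's recogniser can also take an optional list of allowed phrases as a JSON string, which is one way to narrow recognition to an in-vehicle vocabulary; whether that suits a given model is something to check against the Vosk documentation.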

Data Preprocessing: Refining the Input

Once speech is transcribed into text, it undergoes a rigorous preprocessing phase. This is vital for standardising the data and removing extraneous information that could hinder the machine learning model's performance. Common preprocessing techniques include:

- Lowercasing: Converting all text to lowercase ensures consistency, treating 'Open' and 'open' as the same word.

- Tokenisation: Breaking down sentences into individual words or phrases (tokens) for easier analysis.

- Lemmatisation: Reducing words to their base or dictionary form (e.g., 'running' becomes 'run', 'cars' becomes 'car'). This helps in reducing the dimensionality of the data and improves the model's ability to generalise.

- Handling Numbers, Contractions, Empty Strings, and Stop Words: Numbers, empty strings, and common words that carry little semantic meaning (e.g., 'the', 'a', 'is') are removed, while contractions are expanded (e.g., 'don't' becomes 'do not'). This filters noise, reduces data sparsity, and focuses the model on the most important keywords.

These techniques collectively enhance the semantic content extracted from the text, providing clean, uniform data for the subsequent classification phase.
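The preprocessing steps above can be sketched in a few lines of standard-library Python. The contraction, stop-word, and lemma tables here are tiny hand-written stand-ins chosen for illustration; a real system would more likely rely on a full NLP library for these resources.

```python
import re
from typing import List

# Tiny illustrative tables (assumptions for this example only).
CONTRACTIONS = {"don't": "do not", "can't": "can not", "it's": "it is"}
STOP_WORDS = {"the", "a", "an", "is", "it", "to", "please", "do", "of"}
LEMMAS = {"windows": "window", "doors": "door", "opening": "open",
          "cars": "car", "running": "run"}


def preprocess(text: str) -> List[str]:
    """Lowercase, expand contractions, tokenise, lemmatise, drop stop words."""
    text = text.lower().replace("\u2019", "'")  # normalise curly apostrophes
    for short, full in CONTRACTIONS.items():
        text = text.replace(short, full)
    # Keeping only alphabetic runs drops numbers and punctuation,
    # and never yields empty strings.
    tokens = re.findall(r"[a-z]+", text)
    tokens = [LEMMAS.get(token, token) for token in tokens]
    return [token for token in tokens if token not in STOP_WORDS]


print(preprocess("Don't open the 2 Windows, please"))  # ['not', 'open', 'window']
```

Note that 'not' is deliberately kept out of the stop-word table in this sketch, since dropping it would turn "don't open" into "open".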

Data Augmentation: Expanding the Training Horizon

Real-world datasets for in-car commands can sometimes be limited. To address this, data augmentation techniques are employed. These methods artificially expand the training dataset by creating new, varied examples from existing ones. Techniques like random swapping (randomly swapping words within a sentence) and synonym replacement (replacing words with their synonyms) help to increase the diversity of the training set. This not only provides more data for the models to learn from but also significantly mitigates the risk of overfitting, where a model performs well on training data but poorly on unseen data.
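A minimal sketch of the two augmentation techniques named above follows. The synonym table is a hand-written assumption for the example; in practice synonyms would typically come from a thesaurus resource, and each augmented sentence should be checked to ensure it still matches its original command label.

```python
import random
from typing import Dict, List

# Hypothetical synonym table for illustration.
SYNONYMS: Dict[str, List[str]] = {
    "lower": ["drop", "roll down"],
    "open": ["unlock"],
    "connect": ["pair"],
}


def random_swap(tokens: List[str], rng: random.Random, n_swaps: int = 1) -> List[str]:
    """Swap two randomly chosen positions, n_swaps times."""
    tokens = list(tokens)
    if len(tokens) < 2:
        return tokens
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(tokens)), 2)
        tokens[i], tokens[j] = tokens[j], tokens[i]
    return tokens


def synonym_replace(tokens: List[str], rng: random.Random,
                    synonyms: Dict[str, List[str]]) -> List[str]:
    """Replace one randomly chosen word that has an entry in the synonym table."""
    tokens = list(tokens)
    candidates = [i for i, token in enumerate(tokens) if token in synonyms]
    if candidates:
        i = rng.choice(candidates)
        tokens[i] = rng.choice(synonyms[tokens[i]])
    return tokens


rng = random.Random(0)  # seeded so runs are repeatable
original = ["lower", "the", "rear", "windows"]
print(random_swap(original, rng))
print(synonym_replace(original, rng, SYNONYMS))
```

Random swapping changes word order but not vocabulary, so it mainly helps models that are sensitive to position; synonym replacement is what introduces new wording.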

Model Training: Learning the Patterns

With clean and augmented data, the next step is to train machine learning models to recognise patterns associated with each command. Various models can be considered for this task, including:

- Multi-Layer Perceptron (MLP): A type of artificial neural network capable of learning complex non-linear relationships.

- Logistic Regression: A statistical model that is binary in its basic form and extends to multi-class problems through one-vs-rest or multinomial (softmax) formulations.

- RandomForest: An ensemble learning method that constructs a multitude of decision trees at training time and outputs the class that is the mode of the classes (classification) or mean prediction (regression) of the individual trees.

- GradientBoosting: Another ensemble method that builds a strong prediction model from a series of weak prediction models.

- Support Vector Machine (SVM): Often chosen for its effectiveness in high-dimensional spaces, SVM works by finding the optimal hyperplane that best separates different classes of data. For in-car voice command recognition, SVM has proven to be a highly effective model for classifying input text into predefined command categories.

The best model is selected by comparing classification results across these different algorithms, ensuring the most accurate and reliable command recognition.
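The selection step can be sketched as a loop that fits each candidate on the training data and scores it on held-out examples. To stay self-contained, the example below uses two toy stand-in classifiers rather than the algorithms listed above; the class names, the tiny dataset, and the `select_best` helper are all assumptions made for illustration. Any model exposing `fit` and `predict` in the same way could be dropped into the `models` dictionary.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple


class MajorityBaseline:
    """Always predicts the most frequent training label."""

    def fit(self, texts: List[str], labels: List[str]) -> "MajorityBaseline":
        self.label_ = Counter(labels).most_common(1)[0][0]
        return self

    def predict(self, texts: List[str]) -> List[str]:
        return [self.label_ for _ in texts]


class WordOverlapClassifier:
    """Toy model: picks the label whose training words overlap the input most."""

    def fit(self, texts: List[str], labels: List[str]) -> "WordOverlapClassifier":
        self.vocab_ = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.vocab_[label].update(text.split())
        return self

    def predict(self, texts: List[str]) -> List[str]:
        predictions = []
        for text in texts:
            words = text.split()
            best = max(self.vocab_,
                       key=lambda lab: sum(self.vocab_[lab][w] for w in words))
            predictions.append(best)
        return predictions


def accuracy(y_true: List[str], y_pred: List[str]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def select_best(models: Dict[str, object],
                train: Tuple[List[str], List[str]],
                val: Tuple[List[str], List[str]]) -> Tuple[str, Dict[str, float]]:
    """Fit every candidate and return the name with the best validation accuracy."""
    scores = {}
    for name, model in models.items():
        model.fit(*train)
        scores[name] = accuracy(val[1], model.predict(val[0]))
    return max(scores, key=scores.get), scores


train = (["open door", "open front door", "open door now",
          "lower window", "lower rear window",
          "connect bluetooth", "connect phone bluetooth"],
         ["open_doors", "open_doors", "open_doors",
          "lower_windows", "lower_windows",
          "connect_bluetooth", "connect_bluetooth"])
val = (["open rear door", "lower front window", "connect bluetooth phone"],
       ["open_doors", "lower_windows", "connect_bluetooth"])

best, scores = select_best(
    {"majority": MajorityBaseline(), "word_overlap": WordOverlapClassifier()},
    train, val)
print(best, scores)
```

With real data, a single train/validation split is noisy, so cross-validation and per-class metrics are usually a safer basis for the comparison than one accuracy figure.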

Real-Time Recognition: Instantaneous Response

The final piece of the puzzle is enabling the system to capture and process audio in real-time. This means that as soon as a user speaks a command, the system must transcribe, preprocess, and predict the spoken command using the trained models almost instantaneously. This real-time capability is crucial for providing a seamless and responsive user experience, ensuring that commands are executed without noticeable delay.
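The transcribe, preprocess, and predict sequence can be pictured as a simple loop over incoming utterances. In the sketch below the three stages are passed in as functions, and the stand-ins used in the demo (treating bytes as text, a keyword rule as the classifier) are placeholders for a real speech recogniser and trained model. The latency measurement is included only to show where a responsiveness budget would be checked.

```python
import time
from typing import Callable, Iterable, Iterator, List, Tuple


def recognise_stream(
    utterances: Iterable[bytes],
    transcribe: Callable[[bytes], str],
    preprocess: Callable[[str], List[str]],
    classify: Callable[[List[str]], str],
) -> Iterator[Tuple[str, float]]:
    """Yield (command label, latency in ms) for each captured utterance."""
    for audio in utterances:
        start = time.perf_counter()
        text = transcribe(audio)
        tokens = preprocess(text)
        label = classify(tokens)
        yield label, (time.perf_counter() - start) * 1000


# Placeholder stages so the loop can run without audio hardware.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def simple_preprocess(text: str) -> List[str]:
    return text.lower().split()


def keyword_classify(tokens: List[str]) -> str:
    if "open" in tokens and "window" in tokens:
        return "window_open"
    return "Unknown"


results = list(recognise_stream(
    [b"Open the window", b"Play some jazz"],
    fake_transcribe, simple_preprocess, keyword_classify))
for label, latency_ms in results:
    print(f"{label}: {latency_ms:.3f} ms")
```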

The Foundation: Android Automotive and Car Service

For these sophisticated voice command systems to function seamlessly within a vehicle, they need a robust underlying operating system. This is where Android Automotive comes into play. Android Automotive is an operating system specifically designed to run directly on a vehicle's infotainment system, offering a comprehensive platform for in-car technology.

Car Service and the Vehicle Hardware Abstraction Layer (HAL)

At the core of Android Automotive's ability to interact with vehicle hardware are the Car Service and the Vehicle Hardware Abstraction Layer (HAL). The HAL bridges the vehicle's physical components (windows, doors, steering and so on) and the software framework. Its primary role is to implement communication plugins that collect vehicle data and map it to predefined vehicle property types. These property types are standardised in an interface file (types.hal in HIDL-based Android releases), giving the Android framework a consistent interface through which to recognise and interact with vehicle functions. Because each manufacturer supplies its own HAL implementation, the architecture accommodates the specifications of different vehicle models and lets voice commands reach hardware actions through Car Service.

In essence, when you tell your car, "Open the window," the voice command system processes your speech, classifies it as a 'window open' command, and then communicates this instruction via the Car Service and Vehicle HAL to the actual window mechanism, completing the action.
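The last hop, from a classified command to a vehicle property write, can be pictured as a lookup table. The sketch below is a language-neutral illustration in Python, not Android code: the property names, areas, and values are hypothetical placeholders, and on a real Android Automotive system the write would go through the platform's car property APIs rather than a plain callback.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class PropertyAction:
    property_name: str  # hypothetical name, not a real Vehicle HAL identifier
    area: str           # which part of the vehicle the write targets
    value: int


# Hypothetical mapping from classifier labels to property writes.
COMMAND_TO_ACTION: Dict[str, PropertyAction] = {
    "window_open": PropertyAction("WINDOW_POSITION", "front_left", 100),
    "door_unlock": PropertyAction("DOOR_LOCK_STATE", "all", 0),
}


def dispatch(label: str, set_property: Callable[[PropertyAction], None]) -> bool:
    """Send the action for a label to the vehicle layer; False if unmapped."""
    action = COMMAND_TO_ACTION.get(label)
    if action is None:
        return False  # e.g. the 'Unknown' label: nothing is sent to the car
    set_property(action)
    return True


sent = []  # stands in for the vehicle layer in this demo
print(dispatch("window_open", sent.append), sent)
print(dispatch("Unknown", sent.append))
```

Keeping the mapping in a table means unrecognised labels never reach the hardware layer, which fits the 'Unknown' handling described earlier.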

Benefits of In-Car Digital Voice Assistants

The integration of digital voice assistants in vehicles offers significant advantages:

- Enhanced Safety: Drivers can keep their hands on the wheel and eyes on the road, reducing distractions associated with manual control of infotainment or vehicle functions.

- Increased Convenience: Performing tasks like navigation input, climate control adjustments, or music selection becomes effortless and intuitive.

- Accessibility: Voice commands can greatly assist drivers with physical limitations, making vehicle controls more accessible.

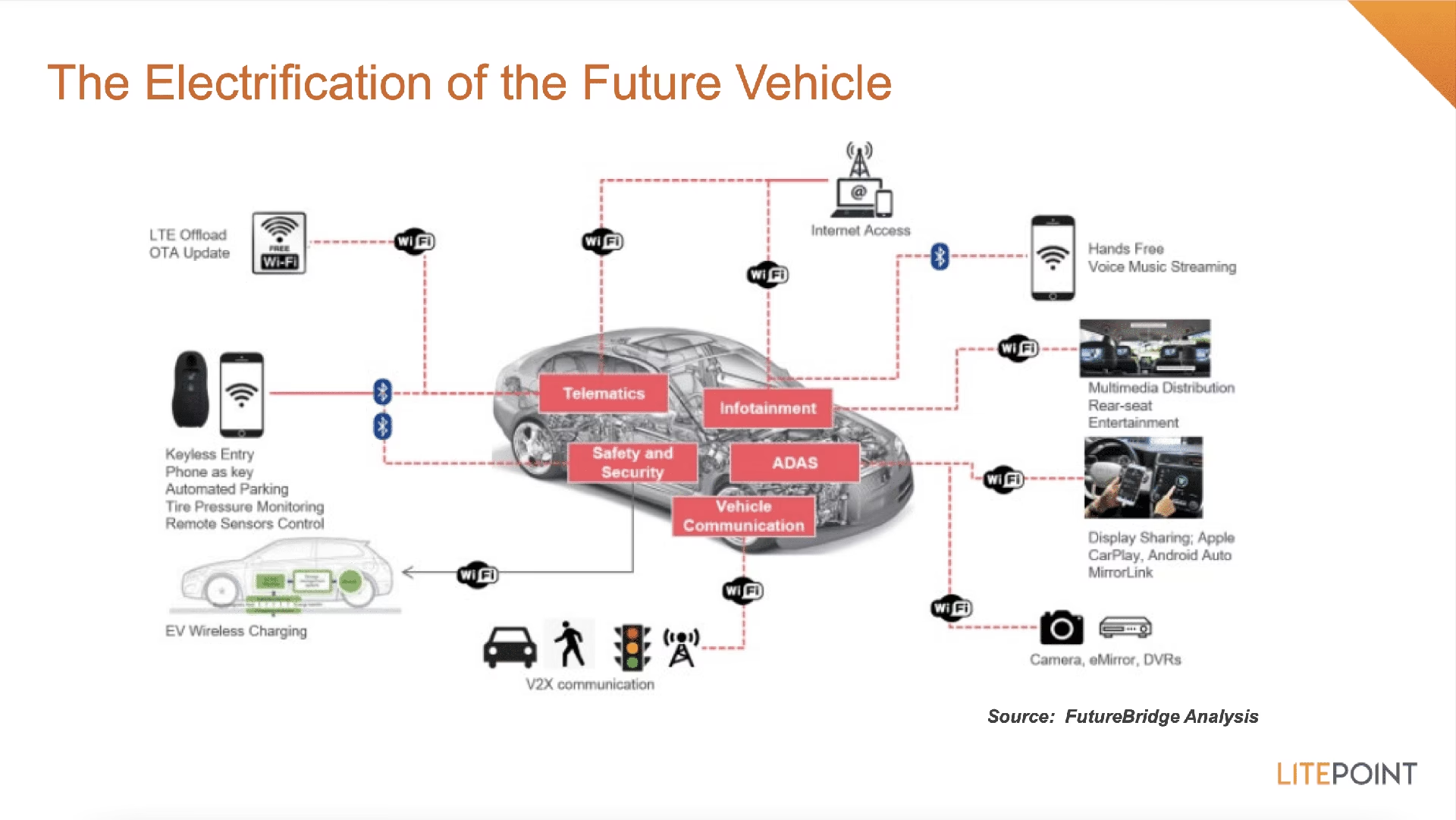

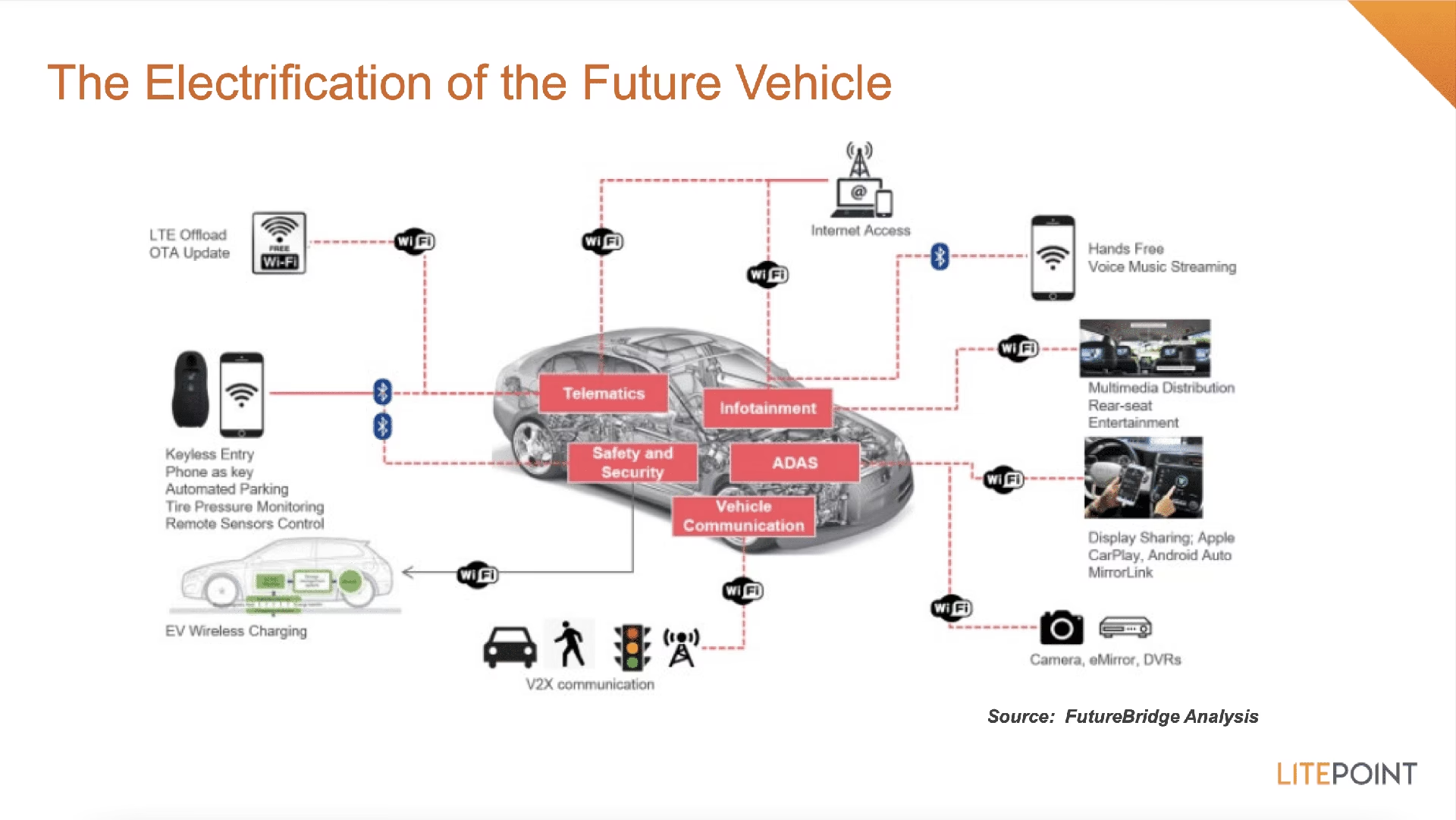

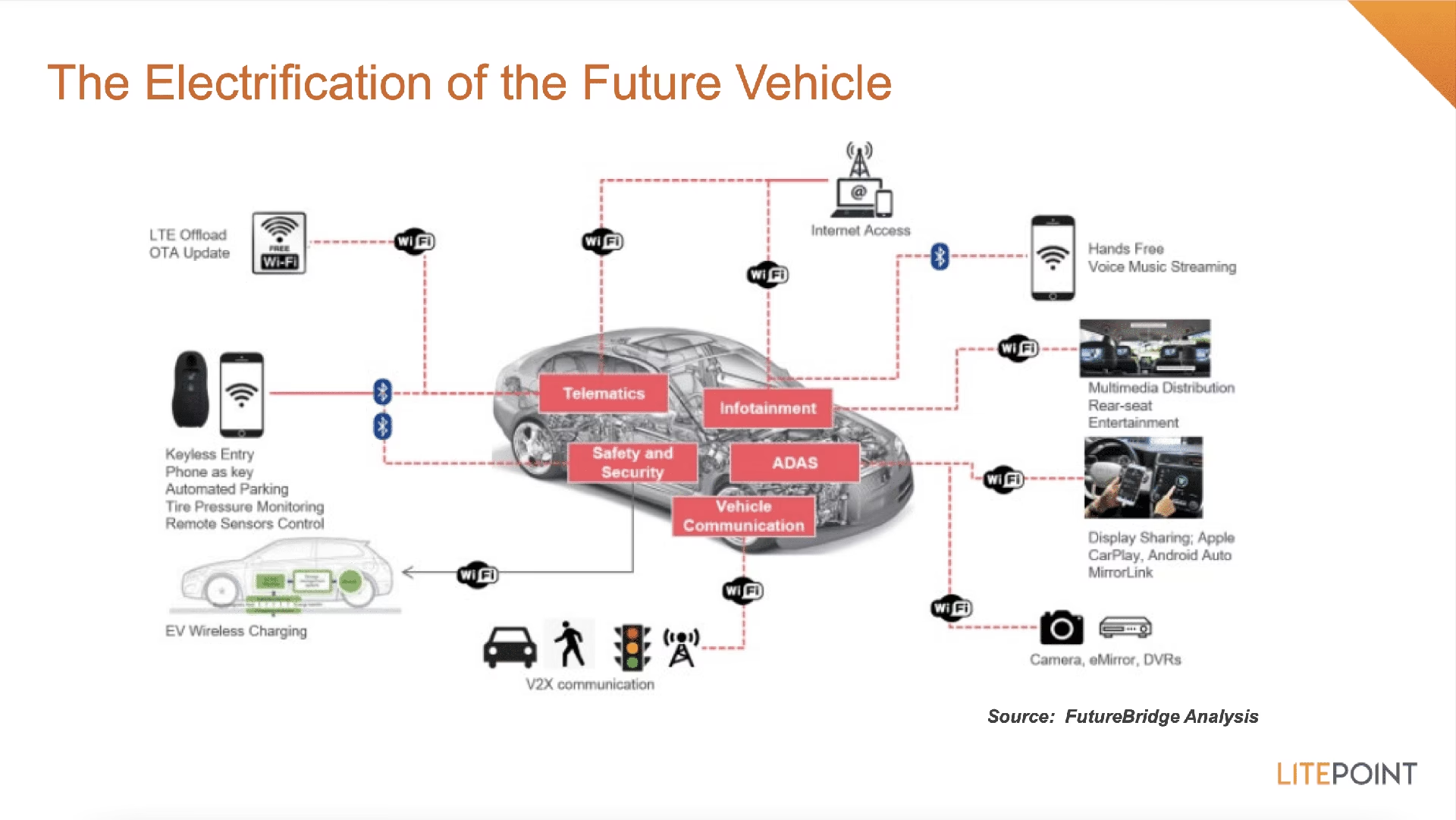

- Future-Proofing: As vehicles become more autonomous and connected, voice interfaces will play an even more critical role in managing complex systems and interacting with smart infrastructure.

Comparing Interaction Methods: Buttons vs. Voice

While traditional buttons and touchscreens have long been the standard, voice commands offer a distinct set of advantages, particularly in a driving context.

| Feature | Traditional Buttons/Touchscreen | Digital Voice Commands |
|---|---|---|
| Distraction Level | Requires visual attention and hand movement, increasing distraction. | Allows hands-free, eyes-on-road operation, significantly reducing distraction. |
| Ease of Use | Can be complex with multiple menus; requires learning button layout. | Intuitive and natural; simply speak your command. |
| Speed of Execution | Can be slow for multi-step tasks. | Often faster for complex commands or navigating deep menus. |
| Accessibility | May be challenging for individuals with motor impairments. | Highly accessible, enabling control for a wider range of users. |
| Learning Curve | Requires familiarisation with system layout. | Minimal, as it mimics natural conversation. |
| Environmental Factors | Unaffected by noise; can be difficult with gloves. | Can be affected by background noise; unaffected by physical barriers like gloves. |

The Road Ahead: Future Enhancements in In-Car Voice Technology

The field of in-car digital voice assistants is continuously evolving, with researchers and developers pushing the boundaries of what's possible. Future work aims to make these systems even more intuitive, comprehensive, and adaptive.

Expanding the Command Set

Current systems handle a predefined set of commands, but future iterations will aim to expand this range significantly. This means covering a wider spectrum of user interactions, from highly specific vehicle diagnostics requests to more nuanced control over climate zones, entertainment preferences, and even predictive maintenance alerts. The goal is to move towards a truly conversational interface where drivers can speak naturally without needing to memorise specific phrases.

Multilingual Support

As the automotive market is global, extending projects to handle commands and instructions in multiple languages is a crucial next step. This requires not only translating and preprocessing text in various languages but also ensuring that the underlying AI models maintain their accuracy across different linguistic nuances, accents, and dialects. Achieving robust multilingual support will make these systems accessible to a much broader audience worldwide.

Continuous Learning

Imagine a system that learns from your preferences and habits. Future voice assistants will explore techniques to enable continuous learning from user input. This means the system could adapt to new commands, recognise individual speaking patterns, and even anticipate user needs based on past interactions. Such adaptability would greatly enhance the personalisation and efficiency of the in-car experience.

Collaborative Filtering Techniques

To further personalise the experience, implementing collaborative filtering techniques is on the horizon. This involves learning from the preferences of different users. For example, if multiple drivers of the same car prefer specific climate settings or music genres at certain times, the system could learn these patterns and proactively adjust settings. This collective intelligence would allow the model to adapt more intelligently to diverse user behaviours and preferences.

Utilising Pre-trained Large Language Models (LLMs)

One of the most exciting areas of future development is the exploration of pre-trained Large Language Models (LLMs) for transfer learning. LLMs, such as those powering advanced AI chatbots, have been trained on vast amounts of text data, giving them a profound understanding of language, context, and intent. By leveraging these powerful models, developers can significantly improve the efficiency of text classification models, especially in scenarios characterised by limited labelled data. This means more accurate understanding of complex or nuanced commands, and potentially the ability to engage in more natural, free-form conversations with the vehicle.

Frequently Asked Questions About In-Car Voice Systems

Q1: Are in-car voice command systems safe to use while driving?

Yes, one of the primary benefits of these systems is enhanced safety. By allowing drivers to control various vehicle functions using voice commands, they can keep their hands on the wheel and eyes on the road, significantly reducing visual and manual distractions compared to interacting with physical buttons or touchscreens.

Q2: Can these systems understand different accents or dialects?

Modern voice recognition systems are becoming increasingly sophisticated and are often trained on diverse datasets that include various accents and dialects. While perfect recognition is always a challenge, continuous improvements in machine learning and deep learning models, along with multilingual support efforts, are making them more capable of understanding a wide range of speech patterns.

Q3: Do I need an internet connection for the voice commands to work?

Many advanced in-car voice command systems, especially those using models like Vosk, are designed to function offline. This means that core commands for vehicle control (e.g., opening windows, adjusting climate) can work without an internet connection. However, features requiring external data, such as real-time navigation updates, streaming music, or complex queries, will typically still require connectivity.

Q4: Can I customise the voice commands or add new ones?

Currently, most in-car systems operate with a predefined set of commands. While some systems may allow for minor customisation (e.g., wake words), adding entirely new commands is generally not a user-facing feature. However, future developments in continuous learning and LLM integration aim to introduce greater adaptability and potential for users to teach the system new phrases or preferences.

Q5: How do these systems handle background noise in the car?

In-car voice systems employ sophisticated noise cancellation algorithms and signal processing techniques to filter out background noise like road sounds, air conditioning, or passenger conversations. This helps the system isolate the driver's voice and accurately interpret commands, even in noisy environments.

The journey of in-car digital voice assistants is a testament to the rapid advancements in AI and automotive technology. As these systems become more intelligent, adaptive, and integrated, they promise to make driving not just safer and more convenient, but truly a seamless extension of our digital lives.
