20/08/2010

In the demanding world of vehicle maintenance and road safety, maintaining the highest standards in MOT testing is not merely a recommendation; it's a fundamental requirement. For every MOT tester and Vehicle Testing Station (VTS) manager across the UK, a powerful, yet often underutilised, tool exists to ensure these standards are consistently met and even surpassed: Test Quality Information (TQI). Published monthly by the Driver and Vehicle Standards Agency (DVSA), TQI data provides an invaluable, granular insight into testing performance, allowing Authorised Examiners (AEs) and site managers to proactively monitor, evaluate, and elevate the quality of their MOT services. Regular review of this data is not just good practice; it's a DVSA mandate designed to foster continuous improvement and uphold the integrity of the MOT scheme, ultimately contributing to safer roads for everyone.

Understanding Test Quality Information (TQI)

Test Quality Information, or TQI, is a comprehensive dataset compiled and issued by the DVSA, offering a detailed snapshot of an individual tester's performance and, by extension, the overall testing standards at a VTS. Essentially, it's a digital report card that provides crucial statistics on various aspects of MOT testing. Each month, this data is refreshed, allowing testers and managers to review their specific failure rates across different categories, compare their test durations against national averages, and identify prevalent vehicle failures. This rich repository of information is designed to serve as a diagnostic tool, enabling AEs and site managers to pinpoint any unusual discrepancies or trends that might indicate a deviation from required testing standards. The DVSA's emphasis on TQI underscores its commitment to ensuring consistency and reliability across all MOT tests conducted nationwide. By diligently reviewing TQI, testers are not just fulfilling a regulatory obligation; they are actively participating in a feedback loop that helps them refine their skills and ensure they are consistently meeting the rigorous benchmarks set by the DVSA.

The data within a TQI report is multifaceted, covering elements such as the total number of tests conducted, the average age of vehicles tested, overall failure rates, average test durations, and critically, component failure rates broken down by specific categories. This detailed breakdown allows testers to compare their individual performance against national averages, offering a clear perspective on areas where they might be excelling or, conversely, where additional training or support could be beneficial. For instance, if a tester's failure rate for braking systems is significantly lower than the national average, it might prompt an investigation into whether all defects are being correctly identified and recorded. Conversely, a much higher rate might suggest an overly stringent interpretation of the manual or a specific type of vehicle being tested more frequently at that station. Understanding and actively utilising TQI is therefore paramount for testers committed to adhering to the highest standards and proactively addressing any areas where their performance may fall short of expectations.

Accessing and Interpreting TQI Reports

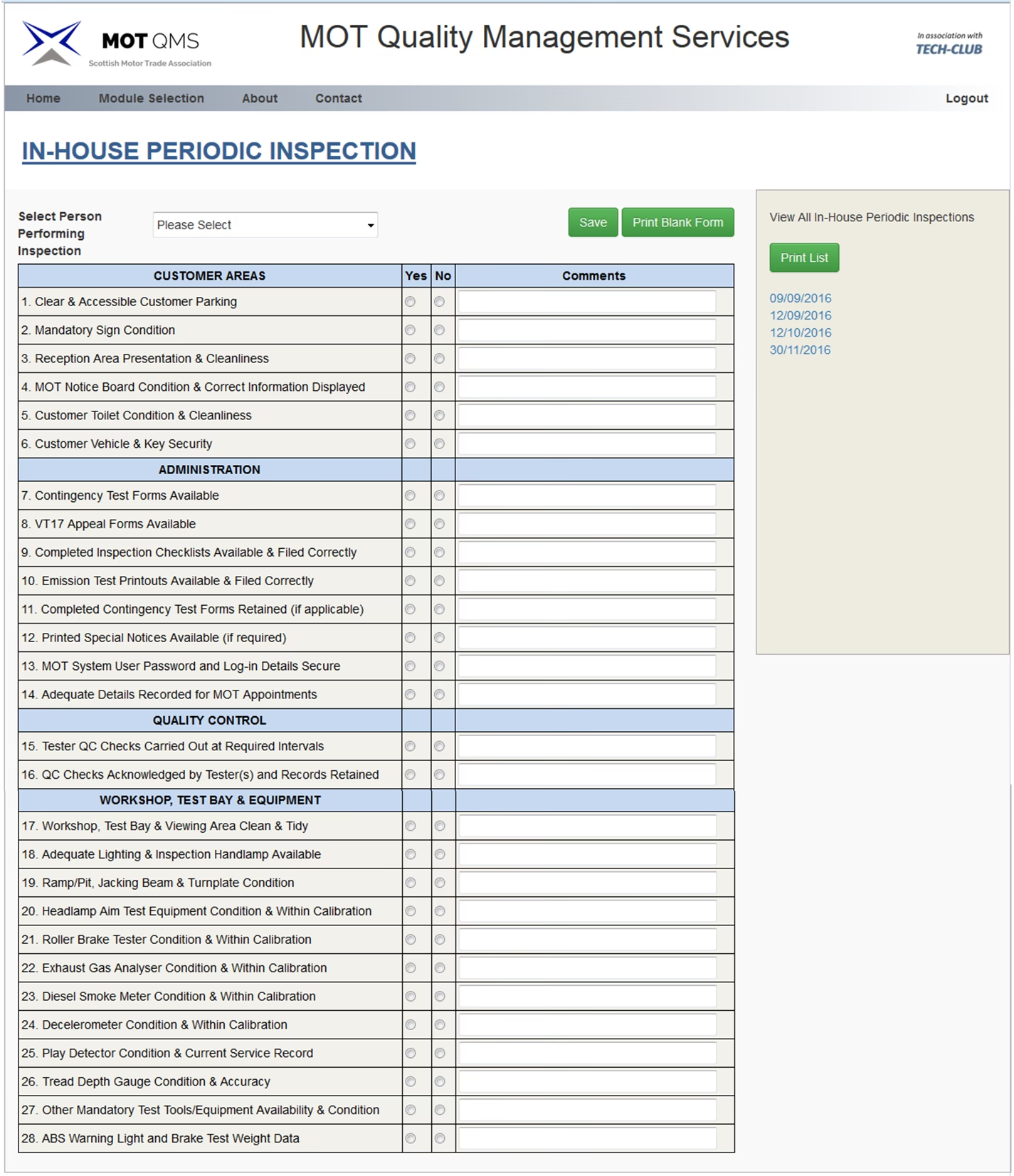

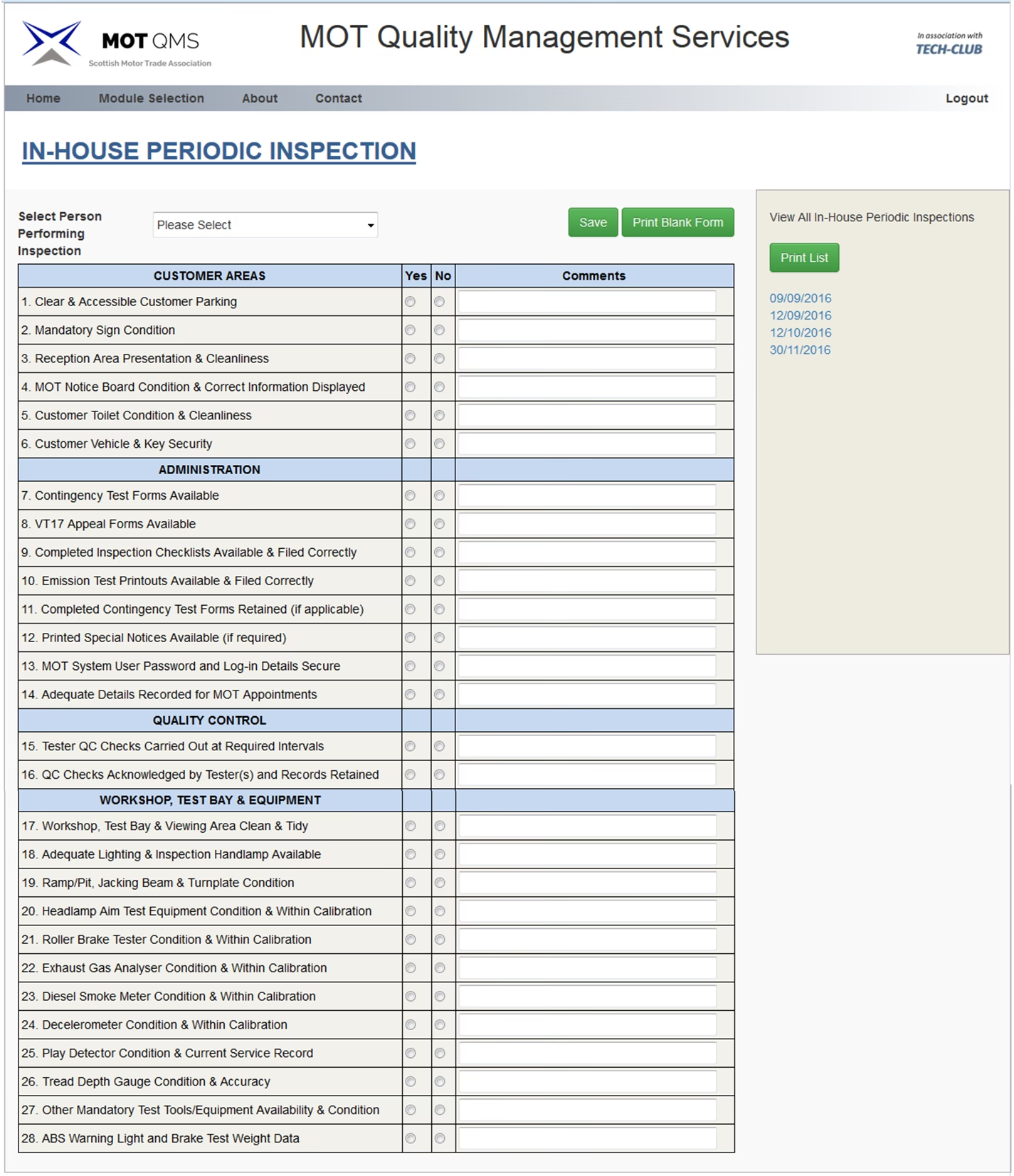

Accessing your TQI reports is a straightforward process, designed to be integrated seamlessly into your daily operations. MOT testers and VTS managers can log into the official MOT testing service portal. Once logged in, navigate to the 'Performance dashboard' section. Within this dashboard, you'll find the option to select 'Test quality information'. From there, you can specify a date range to view the relevant data, allowing you to focus on monthly, quarterly, or even annual performance trends. This flexibility is key to identifying both short-term anomalies and long-term patterns.

The report itself is a treasure trove of statistics. It presents data on overall test results, the average time taken for each test, and a breakdown of the most common vehicle failures observed. Crucially, it also shows each tester's failure rate for every component category, alongside the corresponding national failure rate for that category. This direct comparison is what makes TQI so powerful. When interpreting this report, the primary objective is to look for any unusual differences in the data. For example, is a particular tester's average test duration significantly shorter or longer than the site or national average? Are failure rates for specific components notably higher or lower? These deviations are not necessarily indicative of wrongdoing but are flags that warrant further investigation. The process of scrutinising these differences and investigating any potential issues is fundamental to ensuring that all tests conducted at your VTS are consistent, thorough, and fully compliant with DVSA guidelines. It's about proactive quality control, not just reactive problem-solving.

Analysing Your TQI Report: Key Factors for Scrutiny

A superficial glance at your TQI report will yield little benefit. True value comes from a meticulous analysis, considering several critical factors that provide context to the raw numbers. Understanding these nuances is essential for drawing accurate conclusions and formulating effective improvement strategies.

- Average Vehicle Age: This is a crucial contextual factor. Older vehicles, by their very nature, are more prone to wear and tear and, consequently, more likely to fail an MOT. If your VTS consistently tests a fleet of older vehicles, a higher overall failure rate might be entirely justified and not indicative of poor testing standards. Conversely, if your average vehicle age is low but your failure rate is high, it could signal an issue.

- Test Duration: The time taken to complete an MOT test is a strong indicator of adherence to DVSA guidelines. Tests that are significantly shorter than average might suggest a rushed inspection that overlooks critical defects, which is both a serious road safety risk and a compliance breach. On the other hand, tests that take excessively long could point to inefficiencies, interruptions, or a lack of familiarity with the vehicle type. Both extremes require investigation.

- Component Failure Rates: This is perhaps one of the most actionable insights TQI offers. The report breaks down failures by specific component categories (e.g., brakes, steering, suspension, lamps). If a particular tester shows a consistently low or high failure rate for a specific component compared to their peers or the national average, it could highlight an area where they might need additional training, a refresher on the MOT manual's criteria, or perhaps a different approach to inspection.

- National Averages: The national average serves as your primary benchmark. Comparing your VTS's overall performance, and that of individual testers, against these averages is vital. If your performance aligns closely with the national average, it generally indicates a consistent and compliant operation. Significant deviations, whether positive or negative, demand closer scrutiny.

By diligently analysing these factors, you gain a profound understanding of your testing standards. This analytical process is not just about identifying problems; it's about recognising strengths and areas for further development. Regularly reviewing and acting upon the insights gleaned from your TQI data is the cornerstone of maintaining high standards and providing reliable, compliant MOT tests to your valued customers. Furthermore, the ability to view detailed test logs for specific sites and testers, and to download the data as a CSV file for deeper analysis, empowers managers with an unparalleled level of insight, making your quality control checks robust and highly informative.
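As a rough illustration of the kind of deeper analysis a CSV download makes possible, the sketch below groups test log rows by tester and computes the figures discussed above. The column names (`tester`, `duration_mins`, `result`, `vehicle_age_years`) and the sample rows are assumptions made up for this example; check the headers in your own download and adjust accordingly.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export: the real CSV may use different column names.
SAMPLE_CSV = """tester,duration_mins,result,vehicle_age_years
A. Smith,42,PASS,6
A. Smith,38,FAIL,11
B. Jones,51,PASS,4
B. Jones,47,FAIL,9
B. Jones,44,FAIL,12
"""


def summarise_by_tester(csv_text):
    """Return per-tester test count, failure rate, mean duration and mean vehicle age."""
    rows_by_tester = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        rows_by_tester[row["tester"]].append(row)

    summary = {}
    for tester, rows in rows_by_tester.items():
        n = len(rows)
        fails = sum(1 for r in rows if r["result"] == "FAIL")
        summary[tester] = {
            "tests": n,
            "fail_rate_pct": round(100 * fails / n, 1),
            "avg_duration_mins": round(sum(float(r["duration_mins"]) for r in rows) / n, 1),
            "avg_vehicle_age": round(sum(float(r["vehicle_age_years"]) for r in rows) / n, 1),
        }
    return summary


summary = summarise_by_tester(SAMPLE_CSV)
for tester, stats in summary.items():
    print(tester, stats)
```

To use this with a real download, read the file's contents into a string and pass it to `summarise_by_tester` after renaming the columns in the function to match your file.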

Comparative Analysis: Your VTS vs. The Nation

One of the most powerful features of the TQI report is its direct comparison of your testing statistics against both your site's average and the overarching national average. This comparative lens is incredibly useful for identifying emerging trends or anomalies that might otherwise go unnoticed. For instance, consider the following conceptual comparison table:

| Performance Metric | Your Tester's Average | Your Site's Average | National Average | Implication / Action Required |
|---|---|---|---|---|
| Average Test Duration | 40 mins | 45 mins | 45 mins | Slightly quicker than average. Investigate if thoroughness is maintained. |
| Overall Failure Rate | 32% | 35% | 33% | Consistent with national average. Good. |
| Brake System Failure Rate | 18% | 15% | 10% | Higher than national average. Review tester's brake inspection methods. |
| Visibility Failure Rate | 5% | 7% | 8% | Lower than national average. Check if all minor defects are being identified. |
| Average Vehicle Age | 8 years | 9 years | 7 years | Slightly older vehicles tested. Contextualises failure rates. |

If your site, or a particular team member, consistently tracks the national average across all metrics, then your quality control is likely in a strong position. However, if you observe marked differences between your data and the national average, it's a clear signal that proactive action is needed. This action could take various forms, such as additional, targeted training for specific testers in areas where they are struggling. Alternatively, it might involve temporary supervision or mentoring of your team members to provide direct support and guidance, ensuring they align with best practices and DVSA expectations. The goal is always to support your team in achieving and maintaining consistent, high-quality testing standards.
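The comparison in the conceptual table above can be sketched as a simple check that flags any metric where the tester's figure differs from the national figure by more than a chosen margin. The 25% margin here is purely an assumption for illustration; it is not a DVSA threshold, and a flag only means "look closer", not that anything is wrong.

```python
# Figures from the conceptual table above: (tester average, national average).
METRICS = {
    "Average test duration (mins)": (40, 45),
    "Overall failure rate (%)": (32, 33),
    "Brake system failure rate (%)": (18, 10),
    "Visibility failure rate (%)": (5, 8),
}

# Assumed review margin, not a DVSA figure. Choose one that suits your site.
REVIEW_THRESHOLD = 0.25


def flag_deviations(metrics, threshold=REVIEW_THRESHOLD):
    """Return (metric, direction, percent difference) for metrics outside the margin."""
    flagged = []
    for name, (tester, national) in metrics.items():
        relative_diff = (tester - national) / national
        if abs(relative_diff) > threshold:
            direction = "above" if relative_diff > 0 else "below"
            flagged.append((name, direction, round(relative_diff * 100, 1)))
    return flagged


flagged = flag_deviations(METRICS)
for name, direction, pct in flagged:
    print(f"{name}: {abs(pct)}% {direction} national average, investigate")
```

With the table's figures, only the brake and visibility failure rates fall outside the assumed margin, which matches the two rows the table marks for review.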

The report further categorises percentage failures by specific MOT categories, offering an even more granular view. These include:

- Body, chassis, structure

- Brakes

- Buses and coaches supplementary tests

- Identification of the vehicle

- Lamps, reflectors and electrical equipment

- Noise, emissions and leaks

- Road wheels

- Seat belt installation check

- Seat belts and supplementary restraint systems

- Speedometer and speed limiter

- Steering

- Suspension

- Tyres

- Visibility

This detailed breakdown allows managers to pinpoint exact areas of concern, enabling highly specific interventions rather than broad, unfocused training.

The Significance of Test Times: Too Short or Too Long?

One of the most scrutinised metrics within the TQI report is the average time taken to complete a test. While the DVSA does not stipulate a rigid minimum or maximum test duration, a significant deviation from the national average test time can raise eyebrows and warrants immediate investigation. Why do times matter so much? Because a test time that falls well outside the national average may suggest that tests are not being completed in full accordance with DVSA guidelines, potentially compromising road safety or operational efficiency.

If your tester is completing tests far quicker than the average, several critical questions need to be asked:

- Has a thorough inspection been completed? Rushing through an MOT test significantly increases the risk of missing critical defects that could pose a danger on the road. A comprehensive inspection requires a certain amount of time to meticulously check every component.

- Is the tester’s workload too heavy, leading to a feeling of needing to rush? High pressure and unrealistic targets can inadvertently encourage testers to cut corners. It’s crucial to ensure your testers have a manageable workload that allows for proper adherence to procedures.

- Are all vehicles serviced before they are MOT tested? While a service can address many issues, it shouldn't be relied upon as a substitute for a full, independent MOT test. A quick test after a service might imply less scrutiny, assuming the service has fixed everything.

Conversely, if tests are consistently taking too long, a different set of questions arises:

- Is the test being interrupted by customers or other staff needing information or technical assistance? This is a BIG no-no with the DVSA. An MOT test must be conducted without interruption so that the tester's full focus stays on the vehicle and safety checks.

- Is the vehicle unfamiliar to the MOT tester? Perhaps it's a make or model they rarely encounter, leading to more time spent consulting the manual or understanding specific components. This can indicate a need for broader training.

- Did they leave the vehicle unattended or forget to complete the test for any reason? This is another significant red flag for the DVSA. An MOT test must be conducted continuously by the same tester from start to finish.

Addressing these questions is vital for maintaining compliance, ensuring safety, and optimising the efficiency of your VTS operations.
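One way to turn the questions above into a routine check is to classify each tester's mean test time against the national average and pull up the matching follow-up questions. This is a minimal sketch: the 20% tolerance band is an assumption chosen for the example, since the DVSA sets no fixed minimum or maximum, and the function and variable names are hypothetical.

```python
from statistics import mean

# Follow-up questions taken from the lists above.
FOLLOW_UP = {
    "short": [
        "Has a thorough inspection been completed?",
        "Is the tester's workload too heavy?",
        "Are all vehicles serviced before they are MOT tested?",
    ],
    "long": [
        "Is the test being interrupted by customers or other staff?",
        "Is the vehicle unfamiliar to the tester?",
        "Was the vehicle left unattended or the test left open?",
    ],
    "in range": [],
}


def review_duration(test_minutes, national_avg, tolerance=0.2):
    """Classify a tester's mean duration against the national average.

    tolerance is an assumed band (20% either side), not a DVSA limit.
    """
    avg = mean(test_minutes)
    if avg < national_avg * (1 - tolerance):
        band = "short"
    elif avg > national_avg * (1 + tolerance):
        band = "long"
    else:
        band = "in range"
    return avg, band, FOLLOW_UP[band]


avg, band, questions = review_duration([30, 32, 34], national_avg=45)
print(f"Mean {avg} mins is {band}")
for q in questions:
    print(" -", q)
```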

Addressing Discrepancies in Failure Rates

When your TQI report identifies that one or more of your testers' results are not in sync with the national average failure rates, it's a strong indicator that further inquiry is needed. As a manager, you should delve into the potential reasons behind these discrepancies. This is not about assigning blame but understanding the context and providing the necessary support.

Consider the following critical questions:

- Have the vehicles been serviced before the MOT? As mentioned previously, if a high proportion of vehicles arriving for MOT have just undergone a comprehensive service, it might naturally lead to a lower failure rate. This isn't necessarily a negative, but it provides context to the data.

- Is the tester up to date with the current failure criteria as laid down in the current MOT Manual? The MOT Manual is a "live" document, constantly updated by the DVSA to reflect changes in regulations, vehicle technology, and safety standards. A tester who isn't regularly consulting this document might be working with outdated information, leading to incorrect pass/fail decisions. Regular refreshers and mandatory continuous professional development (CPD) are crucial.

- Does the tester refer to the Manual regularly? Beyond just knowing the criteria, actively using the manual during ambiguous situations is a sign of a diligent tester. Encouraging its regular use as a reference tool is paramount.

- Do they use PRS (Pass after Rectification at Station) properly? PRS is for recording a defect found during the test that is put right at the station before the result is recorded. Misuse or misunderstanding can affect recorded failure rates and compliance.

It's important to note that a tester's pass rate being higher or lower than the site or national average does not automatically mean anything is wrong with their testing standard. For example, a dealership specialising in new vehicles might naturally have a much lower failure rate. Conversely, a garage specialising in repairing older, more problematic vehicles might see a higher failure rate. For testing stations with numerous MOT testers, especially large dealerships or specialist service centres, comparing against site averages is as important as comparing to national averages, as it helps identify internal inconsistencies. You and your MOT testers should regularly review this data, investigate any unusual differences, document the findings, and record the outcome of your investigations. This rigorous process demonstrates due diligence and a commitment to quality control.

Actionable Steps for Managers: Transforming Data into Improvement

As the manager of an MOT testing station, identifying anomalies within your TQI reports is only the first step. The true value lies in taking decisive, necessary action based on those insights. The information provided by TQI is a powerful resource; it's there to help you! To ensure you're consistently leveraging this tool for the benefit of your VTS and your team, establish a clear, repeatable process. Make a checklist so you always:

- Get into the habit of using the TQI Report as part of your regular Quality Control (QC) process. This should not be an infrequent, reactive task but an embedded, proactive element of your monthly operational review. Schedule dedicated time for it.

- Always discuss findings with the MOT tester so you can offer support where it’s needed. This conversation should be constructive and supportive, not accusatory. Frame it as an opportunity for professional development and shared responsibility for maintaining high standards. Use the data to highlight specific areas for improvement, and collaboratively agree on a path forward.

- Make detailed notes on the TQI Report before filing it in an MOT compliance folder. Documenting your observations, discussions with testers, and any agreed-upon actions is crucial. This creates an auditable trail, demonstrating your commitment to compliance and continuous improvement, which can be invaluable during DVSA visits.

- Create an action plan where necessary. If discrepancies are identified, formalise a plan. This might include specific training modules, a period of supervised testing, a refresher on certain sections of the MOT manual, or even adjustments to workload to prevent rushing. Assign responsibilities and set timelines for review.

- Review the report EVERY month. Consistency is key. Monthly review ensures that issues are identified early, before they become ingrained problems, and allows you to track the effectiveness of your action plans over time.
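The checklist above could be kept as a simple structured record per tester per month, so that missing steps are easy to spot. The sketch below is only one possible layout; the class name `TqiReview`, its fields, and the example entry are all hypothetical and not part of any DVSA system.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class TqiReview:
    """One monthly TQI review entry for a single tester (hypothetical layout)."""
    month: str                      # e.g. "2024-03"
    tester: str
    findings: str                   # notes made on the TQI report
    discussed_with_tester: bool = False
    actions: List[str] = field(default_factory=list)
    review_by: Optional[date] = None

    def outstanding(self) -> List[str]:
        """List the checklist steps not yet completed for this review."""
        missing = []
        if not self.findings.strip():
            missing.append("record findings")
        if not self.discussed_with_tester:
            missing.append("discuss with tester")
        if self.actions and self.review_by is None:
            missing.append("set a review date for the action plan")
        return missing


review = TqiReview(
    month="2024-03",
    tester="A. Smith",
    findings="Brake failure rate above national average",
    actions=["Refresher on brake inspection"],
)
print(review.outstanding())
```

Filing one such record each month alongside the annotated report gives a simple way to show what was found, what was discussed, and when the action plan is due for review.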

Combining TQI report analysis with detailed test logs can make your VTS's QC checks incredibly robust and informative, providing a holistic view of performance. This integrated approach ensures that your station remains compliant, efficient, and reputable, delivering high-quality MOT tests that contribute to road safety across the UK.
