15/11/2000

In the rapidly evolving landscape of autonomous systems, from self-driving cars navigating bustling city streets to robots operating in complex environments, the ability to accurately perceive and track dynamic objects is a fundamental necessity. Imagine a self-driving car needing to distinguish between a pedestrian, a cyclist, and an unexpectedly fallen object on the road. This task falls under Multiple Object Tracking (MOT), a critical area of computer vision research. Traditional MOT benchmarks share a significant limitation: they typically evaluate tracking performance against a very small, pre-defined set of object categories. While effective within that scope, this narrow focus barely scratches the surface of the myriad objects encountered in unpredictable real-world applications. As a result, contemporary MOT methods, despite their advancements, remain largely confined to tracking only the object categories they were explicitly trained on. But what if a system could track any object, even one it never encountered during training? This is precisely the challenge addressed by open-vocabulary MOT.

- What is Multiple Object Tracking (MOT)?

- The Limitations of Traditional MOT Benchmarks

- Introducing Open-Vocabulary MOT

- OVTrack: A Pioneering Solution for Open-Vocabulary Tracking

- How OVTrack Works

- Key Benchmarks and Outstanding Results

- Why Open-Vocabulary MOT Matters for the Future

- Frequently Asked Questions (FAQs)

- Conclusion

What is Multiple Object Tracking (MOT)?

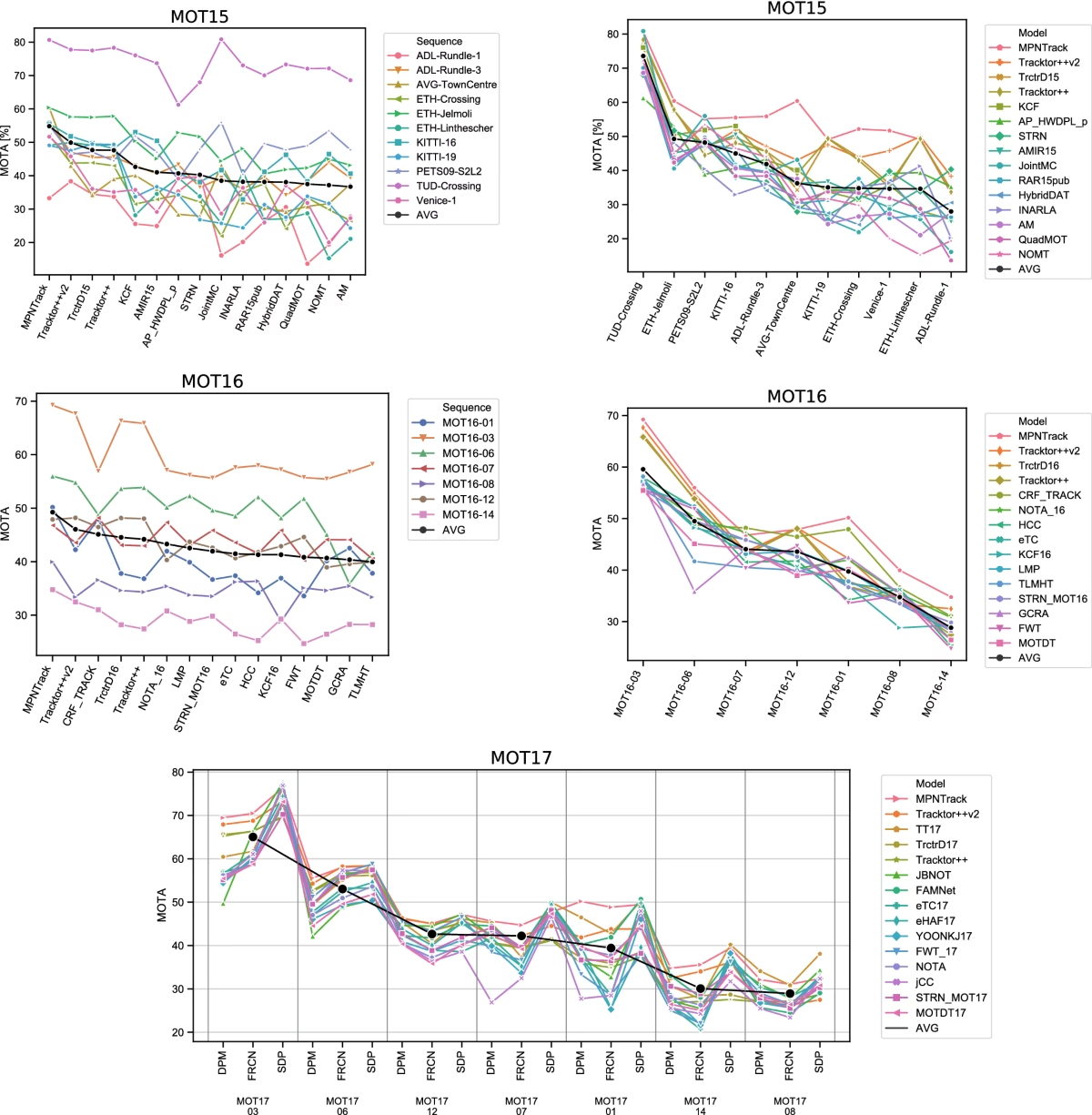

At its core, Multiple Object Tracking (MOT) is the computational problem of identifying and following multiple objects over time within a sequence of images or video frames. It involves two primary sub-tasks: detection, which locates objects in each frame, and association, which links detections of the same object across different frames to form a continuous trajectory. A robust MOT system can maintain the identity of various objects, even when they temporarily disappear from view due to occlusions or camera movements. Traditional MOT benchmarks, such as MOT17 or MOT20, typically focus on a limited set of common categories like 'pedestrians' or 'vehicles'. While these benchmarks have been instrumental in driving advancements in tracking algorithms, their narrow scope presents a significant hurdle for deployment in truly dynamic and diverse environments.
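To make the detection and association split concrete, here is a minimal sketch of the association step only. It assumes detections arrive as `(x1, y1, x2, y2)` boxes and links them greedily by box overlap; the function names, the `min_iou` threshold of 0.3, and the toy boxes are illustrative assumptions, not part of any particular tracker.

```python
from itertools import count


def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def associate(tracks, detections, id_source, min_iou=0.3):
    """Link detections to existing tracks, best overlap first.

    tracks: dict mapping track id -> box from the previous frame.
    Returns a new dict mapping track id -> box for the current frame.
    """
    candidates = sorted(
        ((iou(box, det), tid, j)
         for tid, box in tracks.items()
         for j, det in enumerate(detections)),
        reverse=True,
    )
    updated, used_dets = {}, set()
    for score, tid, j in candidates:
        if score < min_iou:
            break  # remaining pairs overlap too little to be the same object
        if tid in updated or j in used_dets:
            continue  # this track or detection is already matched
        updated[tid] = detections[j]
        used_dets.add(j)
    # Unmatched detections start new tracks; unmatched tracks are dropped here.
    for j, det in enumerate(detections):
        if j not in used_dets:
            updated[next(id_source)] = det
    return updated


# Toy input: two objects in the first two frames, one object in the third.
frames = [
    [(0, 0, 10, 10), (50, 50, 60, 60)],
    [(1, 0, 11, 10), (51, 50, 61, 60)],
    [(2, 0, 12, 10)],
]
ids = count(1)
tracks = {}
history = []
for detections in frames:
    tracks = associate(tracks, detections, ids)
    history.append(sorted(tracks))
print(history)
```

This sketch drops a track as soon as it goes unmatched, so it does not handle the occlusions mentioned above. A practical tracker would keep lost tracks alive for some frames and would usually match on appearance features as well as overlap.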

The Limitations of Traditional MOT Benchmarks

The reliance on a few pre-defined object categories in conventional MOT benchmarks creates a substantial bottleneck for real-world deployment. Consider an autonomous vehicle that encounters an unusual construction cone, a fallen tree branch, or a stray animal – objects that might not have been part of its training dataset. A system limited to pre-defined categories would fail to detect or track these novel objects, leading to potentially hazardous situations. This limitation stems from the fact that most traditional MOT methods are trained on datasets with a fixed, closed vocabulary of object classes. While they excel at tracking 'cars' or 'people', their performance degrades sharply when faced with 'unseen' or 'novel' categories. This highlights a crucial gap between laboratory-based performance and the demands of truly generalisable, adaptable AI systems.

Introducing Open-Vocabulary MOT

To bridge this critical gap, researchers have introduced a novel task: open-vocabulary MOT. This paradigm shift aims to evaluate and develop tracking systems that can go 'beyond pre-defined training categories.' In essence, an open-vocabulary tracker should be capable of recognising, localising, and tracking arbitrary object classes, including those it has never explicitly seen during its training phase. This capability is paramount for creating truly robust and adaptable AI systems, particularly in fields like self-driving cars, robotics, and surveillance, where the environment is inherently unpredictable and dynamic. The ability to handle an 'open vocabulary' of objects means a system can adapt to new scenarios without requiring extensive re-training for every single new object type it might encounter. This represents a significant leap forward from the constrained world of closed-set object recognition and tracking.

OVTrack: A Pioneering Solution for Open-Vocabulary Tracking

At the forefront of solutions addressing this challenge is OVTrack, an open-vocabulary tracker presented at CVPR 2023. OVTrack stands out for a design that enables it to track arbitrary object classes in a data-efficient way. Its success hinges on two pivotal ingredients:

1. Leveraging Vision-Language Models for Classification and Association via Knowledge Distillation

OVTrack cleverly integrates vision-language models (VL models). These powerful models are trained on vast amounts of image-text pairs, allowing them to understand the semantic relationship between visual content and textual descriptions. OVTrack uses these VL models not only for classifying objects (determining what an object is) but also for associating detections across frames (recognising that two detections belong to the same object). This is achieved through a process called knowledge distillation, where the comprehensive understanding of the VL model is transferred to a more compact, task-specific tracker. This allows OVTrack to leverage the rich, generalisable knowledge embedded within large VL models, enabling it to infer and track novel categories.

2. A Data Hallucination Strategy for Robust Appearance Feature Learning from Denoising Diffusion Probabilistic Models

To further enhance its ability to handle novel objects, OVTrack employs a unique data hallucination strategy. This involves utilising denoising diffusion probabilistic models (DDPMs) to generate synthetic, yet realistic, training data. By hallucinating diverse appearances for various objects, OVTrack can learn robust appearance features that are not limited by the specific instances seen in real datasets. This technique is particularly effective because it allows the tracker to acquire a broader understanding of object variations, making it exceptionally data-efficient. Remarkably, OVTrack achieves its state-of-the-art performance while being trained solely on static images, circumventing the need for vast amounts of expensive video-based tracking data.
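The knowledge distillation idea in the first ingredient can be sketched as a loss that pulls the tracker's embeddings towards those of the VL model. The text above does not state OVTrack's exact loss, so this sketch assumes a mean L1 distance between L2-normalised embeddings, a common choice for this kind of distillation. The function names and the toy vectors are hypothetical, and embeddings are assumed to be non-zero.

```python
import math


def l2_normalise(vector):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def distillation_loss(student_embeddings, teacher_embeddings):
    """Mean (over samples) L1 distance between normalised embeddings.

    student_embeddings: outputs of the compact tracker head (assumed).
    teacher_embeddings: outputs of the large VL model for the same regions.
    """
    total = 0.0
    for student, teacher in zip(student_embeddings, teacher_embeddings):
        s, t = l2_normalise(student), l2_normalise(teacher)
        total += sum(abs(a - b) for a, b in zip(s, t))
    return total / len(student_embeddings)


# Toy 2-D embeddings for two object regions (made-up values).
teacher = [[1.0, 0.0], [0.0, 2.0]]
student_before = [[3.0, 4.0], [0.0, 1.0]]  # first region points the wrong way
student_after = [[5.0, 0.0], [0.0, 1.0]]   # directions now match the teacher

loss_before = distillation_loss(student_before, teacher)
loss_after = distillation_loss(student_after, teacher)
print(loss_before, loss_after)
```

Because both sides are normalised, only the direction of each embedding matters. Training would minimise this loss so that the student's embedding space lines up with the teacher's.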

How OVTrack Works

OVTrack's operational framework during both training and testing phases demonstrates its innovative use of VL models:

- During Training: OVTrack leverages VL models for two main purposes: generating synthetic samples to augment its training data (data hallucination) and knowledge distillation. The distillation step transfers the object understanding of the large VL model to OVTrack's core tracking architecture.

- During Testing: When presented with new scenes, OVTrack can track both 'base classes' (objects it has seen during training) and 'novel classes' (objects it has never explicitly encountered). It achieves this by dynamically querying a vision-language model, allowing it to adapt its understanding to new object descriptions on the fly.
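One way to picture the test-time querying is as a comparison between a region embedding and one text embedding per class name, so that supporting a new class only requires a new text embedding. The sketch below is an assumption-laden illustration: the three-dimensional vectors stand in for real VL-model embeddings, and `classify` and the `temperature` value are hypothetical names and settings, not OVTrack's API.

```python
import math


def normalise(vector):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def classify(region_embedding, text_embeddings, temperature=0.01):
    """Softmax over cosine similarities between a region and class prompts."""
    region = normalise(region_embedding)
    names = list(text_embeddings)
    logits = [
        sum(a * b for a, b in zip(region, normalise(text_embeddings[name]))) / temperature
        for name in names
    ]
    top = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(logit - top) for logit in logits]
    total = sum(exps)
    return {name: e / total for name, e in zip(names, exps)}


# Stand-ins for text embeddings of the base class names (made-up values).
text_embeddings = {"car": [1.0, 0.0, 0.0], "person": [0.0, 1.0, 0.0]}
region = [0.1, 0.2, 0.9]  # embedding of a detected region (made-up values)

probs_before = classify(region, text_embeddings)
best_before = max(probs_before, key=probs_before.get)

# Adding a novel class at test time only needs one more text embedding.
text_embeddings["traffic cone"] = [0.0, 0.0, 1.0]
probs_after = classify(region, text_embeddings)
best_after = max(probs_after, key=probs_after.get)
print(best_before, best_after)
```

With only the base classes, the region is forced onto the nearest known label. Once the novel class name is added, the same region is assigned to it without any retraining.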

Key Benchmarks and Outstanding Results

OVTrack's effectiveness has been rigorously validated across several prominent benchmarks, consistently outperforming existing state-of-the-art methods. These benchmarks are crucial for objectively comparing the performance of different MOT algorithms.

- TAO Benchmark: The TAO benchmark (Tracking Any Object) is particularly significant as it is a large-scale, large-vocabulary dataset designed to push the boundaries of object tracking beyond a limited number of categories. OVTrack sets a new state-of-the-art on this challenging benchmark.

- BDD100K MOT and MOTS Benchmarks: BDD100K is another widely recognised dataset, especially pertinent for autonomous driving research. OVTrack demonstrates superior performance here as well, underscoring its applicability in real-world automotive scenarios.

- TETA Benchmark: This benchmark is specifically designed to facilitate open-vocabulary MOT evaluation. OVTrack's results on TETA highlight its strengths in handling novel categories.

Let's examine some of the reported main results:

TETA Benchmark Performance (Higher is Better)

| Method | Backbone | Pretrain | TETA | LocA | AssocA |
|---|---|---|---|---|---|
| QDTrack (CVPR21) | ResNet-101 | ImageNet-1K | 30.0 | 50.5 | 27.4 |
| TETer | ResNet-101 | ImageNet-1K | 33.3 | 51.6 | 35.0 |
| OVTrack | ResNet-50 | ImageNet-1K | 34.7 | 49.3 | 36.7 |
| OVTrack (dynamic rcnn threshold) | ResNet-50 | ImageNet-1K | 36.2 | 53.8 | 37.3 |

Note: LocA (Localisation Accuracy), AssocA (Association Accuracy), and ClsA (Classification Accuracy) are the three components of the TETA metric, which is their arithmetic mean. OVTrack shows competitive or superior results despite using a lighter ResNet-50 backbone where the other methods use ResNet-101. The 'dynamic rcnn threshold' variant adjusts the R-CNN score threshold based on the number of classes to track, which is an adaptive inference strategy.
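The relationship between the three component scores and the overall TETA score can be written in a few lines. The numbers below are made-up round values chosen for easy arithmetic, not results from any tracker, and the function names are hypothetical.

```python
def teta(loc_a, assoc_a, cls_a):
    """Overall TETA score as the arithmetic mean of its three components."""
    return (loc_a + assoc_a + cls_a) / 3.0


def missing_component(teta_score, known_a, known_b):
    """Recover the third component when TETA and two components are known."""
    return 3.0 * teta_score - known_a - known_b


# Illustrative values only.
score = teta(50.0, 30.0, 10.0)
recovered = missing_component(score, 50.0, 30.0)
print(score, recovered)
```

Because the three components are weighted equally, a tracker that localises and associates well can still have its overall score pulled down by weak classification.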

TAO Benchmark Performance (Higher is Better)

| Method | Backbone | Track AP50 | Track AP75 | Track AP |
|---|---|---|---|---|
| SORT-TAO (ECCV 20) | ResNet-101 | 13.2 | - | - |
| QDTrack (CVPR21) | ResNet-101 | 15.9 | 5.0 | 10.6 |
| GTR (CVPR 2022) | ResNet-101 | 20.4 | - | - |
| TAC (ECCV 2022) | ResNet-101 | 17.7 | 5.8 | 7.3 |
| BIV (ECCV 2022) | ResNet-101 | 19.6 | 7.3 | 13.6 |
| OVTrack | ResNet-50 | 21.2 | 10.6 | 15.9 |

Note: Track AP (Average Precision) measures tracking accuracy. Track AP50 and Track AP75 use Intersection over Union (IoU) thresholds of 0.5 and 0.75 respectively, and Track AP is the overall score. OVTrack, even with a lighter ResNet-50 backbone, surpasses previous state-of-the-art models such as GTR and BIV on this large-vocabulary dataset.
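For track-level metrics, the IoU threshold is applied to whole trajectories rather than single boxes. A common formulation, assumed here, is a spatio-temporal IoU: intersection areas summed over frames, divided by union areas summed over frames. The sketch below illustrates that idea with hypothetical function names and toy boxes; it is not the official TAO evaluation code.

```python
def box_area(box):
    """Area of a box given as (x1, y1, x2, y2)."""
    return max(0.0, box[2] - box[0]) * max(0.0, box[3] - box[1])


def intersection_area(a, b):
    """Overlap area of two boxes, zero if they do not intersect."""
    width = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    height = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    return width * height


def track_iou(predicted, ground_truth):
    """Spatio-temporal IoU of two tracks given as dicts of frame -> box."""
    inter = union = 0.0
    for frame in set(predicted) | set(ground_truth):
        p, g = predicted.get(frame), ground_truth.get(frame)
        if p is not None and g is not None:
            overlap = intersection_area(p, g)
            inter += overlap
            union += box_area(p) + box_area(g) - overlap
        else:
            # A frame covered by only one track adds to the union alone.
            union += box_area(p if p is not None else g)
    return inter / union if union > 0 else 0.0


# Toy tracks: perfect overlap, half overlap, then a missed frame.
predicted = {0: (0, 0, 10, 10), 1: (0, 0, 10, 10)}
ground_truth = {0: (0, 0, 10, 10), 1: (5, 0, 15, 10), 2: (0, 0, 10, 10)}

value = track_iou(predicted, ground_truth)
matches_at_50 = value >= 0.5
print(value, matches_at_50)
```

In this toy case the predicted track drifts in the second frame and misses the third, so it falls below the 0.5 threshold and would not count as a match at Track AP50.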

Open-Vocabulary Results (Validation Set) - Higher is Better

| Method | Base Classes | Novel Data | LVIS Data | TAO Base | TETA Novel |
|---|---|---|---|---|---|
| QDTrack | ✓ | ✓ | ✓ | 27.1 | 22.5 |
| TETer | ✓ | ✓ | ✓ | 30.3 | 25.7 |
| DeepSORT (ViLD) | ✓ | ✓ | ✓ | 26.9 | 21.1 |
| Tracktor++ (ViLD) | ✓ | ✓ | ✓ | 28.3 | 22.7 |
| OVTrack | ✓ | | ✓ | 35.5 | 27.8 |
| OVTrack (dynamic rcnn threshold) | ✓ | | ✓ | 37.1 | 28.8 |

Note: This table compares performance on base classes (seen during training) and novel classes (unseen during training). The checkmark columns describe how each method was trained, and the last two columns report the scores. OVTrack outperforms the other methods on both base and novel categories; the 'TETA Novel' column most directly measures open-vocabulary capability. The dynamic R-CNN threshold improves the results further without altering the core model.

Why Open-Vocabulary MOT Matters for the Future

The emergence of open-vocabulary MOT benchmarks and solutions like OVTrack marks a pivotal moment in the development of truly intelligent autonomous systems. By moving beyond the limitations of pre-defined categories, these advancements pave the way for systems that are more robust, adaptable, and safer in unpredictable real-world environments. Imagine a future where a robot can track any tool it's asked to fetch, or a surveillance system can monitor any unusual object activity, regardless of whether it was explicitly programmed to recognise that specific object. This shift reduces the need for constant re-training with new data for every new object type, significantly cutting down development costs and accelerating deployment timelines. It fosters a more generalisable form of artificial intelligence, capable of learning and adapting much like humans do, by inferring and understanding novel concepts from existing knowledge.

Frequently Asked Questions (FAQs)

- What is the primary limitation of traditional Multiple Object Tracking (MOT) benchmarks?

- Traditional MOT benchmarks typically rely on a small, pre-defined set of object categories, limiting the ability of tracking systems to perform effectively on novel or unseen objects encountered in diverse real-world scenarios.

- What is Open-Vocabulary Multiple Object Tracking?

- Open-vocabulary MOT is a novel task that aims to evaluate and develop tracking systems capable of recognising, localising, and tracking arbitrary object classes, including those not seen during their initial training. This allows for greater adaptability in unpredictable environments.

- How does OVTrack achieve its open-vocabulary capability?

- OVTrack achieves this through two key ingredients: leveraging vision-language models for both classification and association via knowledge distillation, and employing a data hallucination strategy using denoising diffusion probabilistic models to learn robust appearance features from static images.

- What are Vision-Language (VL) Models in the context of OVTrack?

- VL models are powerful AI models trained on vast amounts of image-text data, enabling them to understand the semantic connections between visual content and linguistic descriptions. OVTrack uses these models to transfer broad object knowledge for tracking both seen and unseen categories.

- What is 'knowledge distillation' as used by OVTrack?

- Knowledge distillation is a technique where the comprehensive knowledge learned by a large, powerful model (in this case, a vision-language model) is transferred to a smaller, more efficient model (OVTrack's core tracking architecture). This allows the smaller model to inherit the generalisation capabilities of the larger one.

- Which benchmarks are used to evaluate OVTrack's performance?

- OVTrack's performance is evaluated on the large-scale, large-vocabulary TAO benchmark, the BDD100K MOT and MOTS benchmarks, and the TETA benchmark, which is specifically designed for open-vocabulary MOT evaluation.

Conclusion

The journey towards truly intelligent and autonomous systems is punctuated by significant breakthroughs, and the development of open-vocabulary MOT marks one such pivotal moment. By addressing the critical limitation of traditional tracking methods, solutions like OVTrack are not just improving tracking accuracy; they are fundamentally changing how AI systems perceive and interact with the dynamic, unpredictable world around them. With its innovative use of vision-language models and data hallucination, OVTrack has demonstrated a remarkable ability to track arbitrary objects, setting new benchmarks and paving the way for a future where autonomous vehicles, robots, and smart surveillance systems can operate with unprecedented levels of awareness and adaptability. This research underscores the ongoing commitment to creating AI that is not only powerful but also truly versatile and robust in the face of real-world complexity.
