01/01/2013

When delving into the fascinating realm of multi-object tracking (MOT) research, understanding and correctly utilising benchmark datasets is absolutely paramount. A common query among researchers revolves around the relationship between the MOT16 and MOT17 datasets, specifically whether the data from MOT16 can be effectively reused within the MOT17 framework. The short answer is yes, but with critical caveats that demand a thorough understanding of the significant enhancements introduced in MOT17. This guide aims to clarify the nuances of these datasets, detailing how to leverage their interconnectedness for your tracking projects while ensuring data integrity.

- Understanding the MOT Datasets: Evolution and Purpose

- The Crucial Enhancements in MOT17: Ground Truth and Detections

- Reusing MOT16 Frames: The Practical Approach

- Deep Dive into MOT17 Dataset Characteristics

- Best Practices for Utilising MOT17 Data

- Frequently Asked Questions About MOT16 & MOT17 Data

- Conclusion

Understanding the MOT Datasets: Evolution and Purpose

The MOT Challenge provides a standardised platform for evaluating multi-object tracking algorithms. These datasets are fundamental, offering video sequences with annotated bounding boxes and unique identities for each tracked object, known as ground truth. This allows researchers to quantitatively assess the performance of their tracking models against a reliable benchmark.

MOT16 served as a foundational dataset, establishing a set of challenging sequences for the community. However, as research progressed, the need for more accurate and robust ground truth became apparent. This led to the development of MOT17, which, rather than introducing entirely new video sequences, intelligently builds upon its predecessor.

The key innovation in MOT17 is its commitment to providing a more accurate ground truth for all the sequences originally featured in MOT16. This means that while the raw video frames remain identical to their MOT16 counterparts, the precise annotations of object locations and identities have been meticulously refined. This refinement is crucial for fair and accurate evaluation of tracking algorithms, as even minor inaccuracies in ground truth can significantly skew performance metrics.

The Crucial Enhancements in MOT17: Ground Truth and Detections

The primary distinction between MOT16 and MOT17 lies in two critical areas: the ground truth annotations and the provision of multiple detection sets.

Refined Ground Truth: The Backbone of Evaluation

As mentioned, MOT17 features a new, more accurate ground truth for every sequence previously seen in MOT16. This isn't just a minor update; it's a fundamental improvement that ensures the benchmark is as reliable as possible. For any serious multi-object tracking research, relying on this refined ground truth is essential to obtain valid and comparable results. Using the older MOT16 ground truth with MOT17 sequences would lead to inaccurate evaluations of your tracking algorithm's performance.

Diverse Detection Sets: A Realistic Challenge

Beyond the improved ground truth, MOT17 significantly enhances the dataset by providing three distinct sets of detections for each sequence. These detections are generated by different object detection algorithms, offering varying levels of quality and characteristics. This is incredibly valuable for researchers, as it allows for the evaluation of tracking algorithms under more realistic and diverse conditions. Tracking algorithms rarely operate on perfect detections; they must be robust to the output of real-world detectors. The three detection sets provided are:

- DPM (Deformable Part Model): Represents an older, yet still relevant, class of object detectors. Detections from DPM often exhibit lower recall and precision compared to modern neural network-based approaches.

- Faster-RCNN (Region-based Convolutional Neural Network): A widely recognised and powerful deep learning-based object detector. Faster-RCNN detections are generally more accurate and robust than DPM.

- SDP (Soft-NMS based Detector): A detector that often provides high-quality detections, particularly in crowded scenes, by employing Soft-NMS (Non-Maximum Suppression) for better handling of overlapping bounding boxes.

The inclusion of these varied detection sets allows researchers to:

- Assess the robustness of their tracking algorithms to different detector performance levels.

- Understand how their tracker performs when presented with varying qualities of input detections.

- Compare their algorithm's performance fairly against others using the exact same detection inputs.

Reusing MOT16 Frames: The Practical Approach

The prompt asks directly: "Can I re-use MOT16 & MOT17 data?" The answer, specifically concerning the raw video frames, is a resounding yes. The official MOT17 download information explicitly states: "Note that the data contains the same set of sequences (frames) as MOT16 three times." This means you do not need to download the video frames separately if you already possess the MOT16 dataset.

For convenience, you can download the entire MOT17 data package, which will extract into the correct folder structure, including all the new ground truth and detection files alongside the video sequences. Alternatively, if you wish to conserve bandwidth or have existing MOT16 sequences stored locally, you absolutely "may re-use the MOT16 sequences (frames) locally."

However, and this is a critical point, reusing the frames does not imply reusing the associated annotations or detections from MOT16. The statement "Important: Both the ground truth and the detection set is new for MOT17!" cannot be overstated. When working with MOT17, you must ensure that you are using the corresponding MOT17 ground truth files and the specific MOT17 detection files (SDP, FRCNN, or DPM) for your chosen sequences. Failing to do so will invalidate your experimental results.

Deep Dive into MOT17 Dataset Characteristics

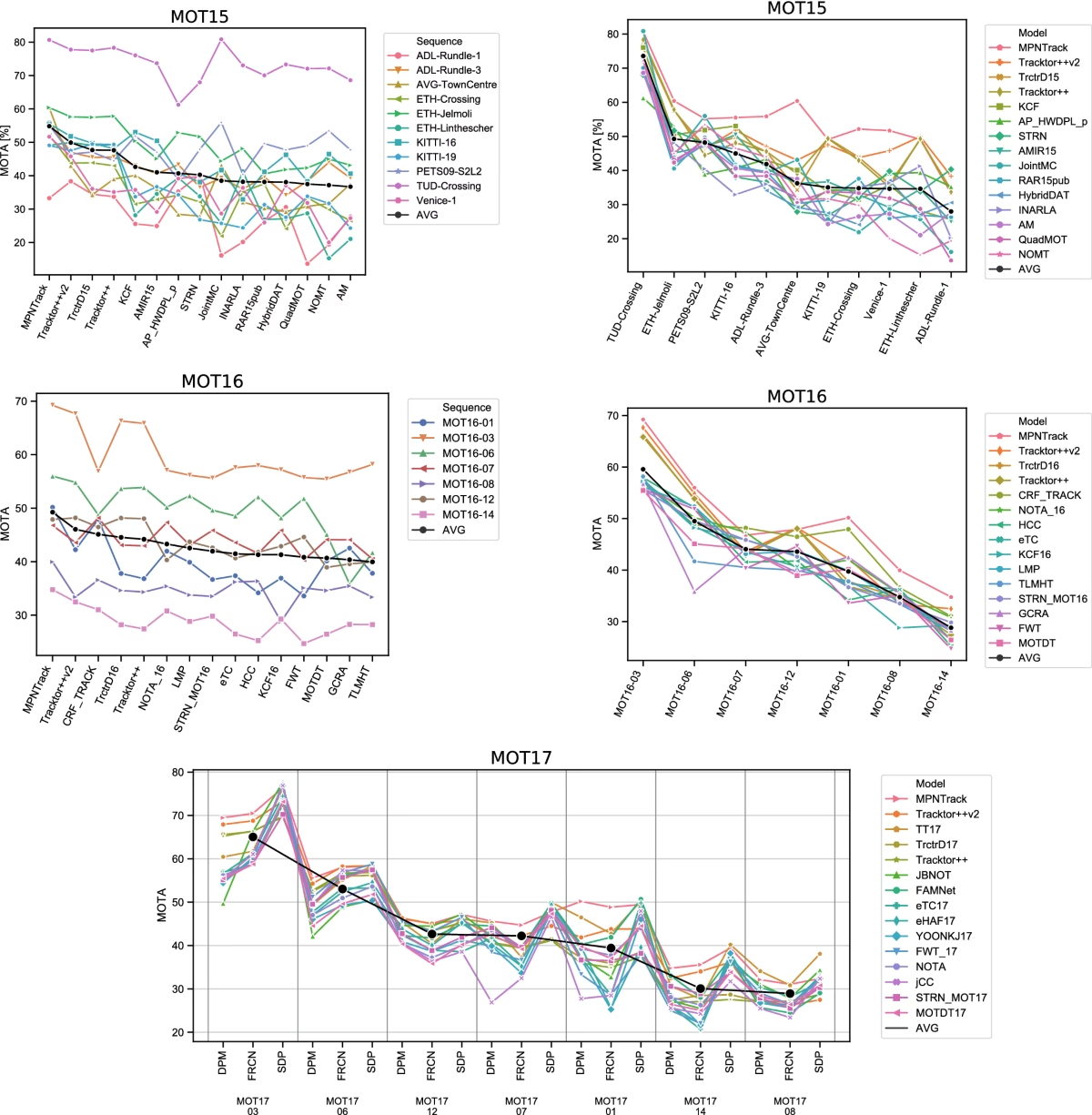

Let's examine the detailed specifications of the MOT17 training and test sets provided, offering a clearer picture of the diverse scenarios covered.

MOT17 Training Set Overview

The training set comprises a variety of scenes, offering a rich environment for developing and refining tracking algorithms. Each sequence is provided with the three detector outputs, leading to multiple entries for what is essentially the same video sequence.

| Sample Name | FPS | Resolution | Length (Frames) | Tracks | Boxes | Density (Boxes/Frame) | Description |

|---|---|---|---|---|---|---|---|

| MOT17-13-SDP/FRCNN/DPM | 25 | 1920x1080 | 750 | 110 | 11642 | 15.5 | Filmed from a bus on a busy intersection. |

| MOT17-11-SDP/FRCNN/DPM | 30 | 1920x1080 | 900 | 75 | 9436 | 10.5 | Forward moving camera in a busy shopping mall. |

| MOT17-10-SDP/FRCNN/DPM | 30 | 1920x1080 | 654 | 57 | 12839 | 19.6 | A pedestrian scene filmed at night by a moving camera. |

| MOT17-09-SDP/FRCNN/DPM | 30 | 1920x1080 | 525 | 26 | 5325 | 10.1 | A pedestrian street scene filmed from a low angle. |

| MOT17-05-SDP/FRCNN/DPM | 14 | 640x480 | 837 | 133 | 6917 | 8.3 | Street scene from a moving platform. |

| MOT17-04-SDP/FRCNN/DPM | 30 | 1920x1080 | 1050 | 83 | 47557 | 45.3 | Pedestrian street at night, elevated viewpoint. |

| MOT17-02-SDP/FRCNN/DPM | 30 | 1920x1080 | 600 | 62 | 18581 | 31.0 | People walking around a large square. |

The training set offers a good mix of scenarios:

- Resolution & FPS: Predominantly high-resolution (1920x1080) at 30 FPS, with one sequence (MOT17-05) at lower resolution (640x480) and 14 FPS, providing variability.

- Scene Diversity: From busy intersections (MOT17-13) and shopping malls (MOT17-11) to night scenes (MOT17-10, MOT17-04) and moving camera perspectives (MOT17-05, MOT17-10), the set challenges trackers in various real-world conditions.

- Density: Box density, representing the average number of detected objects per frame, varies significantly. MOT17-04-SDP stands out with a very high density of 45.3 boxes/frame, indicating a particularly crowded scene from an elevated viewpoint at night. This sequence, along with MOT17-02-SDP (31.0 boxes/frame), presents substantial challenges for association and occlusion handling.

- Total Data: The training set encompasses a total of 15,948 frames and over 336,000 ground truth boxes across all detector permutations, providing a robust foundation for model training.

MOT17 Test Set Overview

The test set follows a similar structure, featuring sequences that are distinct from the training set but cover comparable challenging conditions. This ensures that models are evaluated on unseen data, reflecting their generalisation capabilities.

| Sample Name | FPS | Resolution | Length (Frames) | Tracks | Boxes | Density (Boxes/Frame) | Description |

|---|---|---|---|---|---|---|---|

| MOT17-14-SDP/FRCNN/DPM | 25 | 1920x1080 | 750 | 164 | 18483 | 24.6 | Filmed from a bus on a busy intersection. |

| MOT17-12-SDP/FRCNN/DPM | 30 | 1920x1080 | 900 | 91 | 8667 | 9.6 | Forward moving camera in a busy shopping mall. |

| MOT17-08-SDP/FRCNN/DPM | 30 | 1920x1080 | 625 | 76 | 21124 | 33.8 | A crowded pedestrian street, stationary camera. |

| MOT17-07-SDP/FRCNN/DPM | 30 | 1920x1080 | 500 | 60 | 16893 | 33.8 | A busy pedestrian street filmed at eye level by a moving camera. |

| MOT17-06-SDP/FRCNN/DPM | 14 | 640x480 | 1194 | 222 | 11784 | 9.9 | Street scene from a moving platform. |

| MOT17-03-SDP/FRCNN/DPM | 30 | 1920x1080 | 1500 | 148 | 104675 | 69.8 | Pedestrian street at night, elevated viewpoint. |

| MOT17-01-SDP/FRCNN/DPM | 30 | 1920x1080 | 450 | 24 | 6450 | 14.3 | People walking around a large square. |

Key observations from the test set:

- Increased Density: The test set features sequences with even higher densities. MOT17-03-SDP, for instance, boasts an extremely high density of 69.8 boxes/frame, making it one of the most challenging sequences for tracking due to severe occlusions and numerous interactions. This is a significant increase from the training set's highest density.

- Longer Sequences: MOT17-03-SDP is also notably longer at 1500 frames (50 seconds), providing extended tracking challenges. MOT17-06-SDP is also quite long at 1194 frames.

- Diverse Camera Motions: Similar to the training set, the test set includes varied camera perspectives, from stationary (MOT17-08) to moving (MOT17-07, MOT17-06), ensuring comprehensive evaluation.

- Total Data: The test set offers 17,757 frames and over 564,000 ground truth boxes, making it a substantial and challenging benchmark for final evaluation.

The overall total frames and boxes across both training and test sets highlight the sheer volume and complexity of data available in MOT17, providing a robust environment for developing and testing advanced tracking algorithms.

Best Practices for Utilising MOT17 Data

To ensure your research is robust and your results are comparable with other works in the field, adhere to these best practices when using MOT17:

- Always Use MOT17 Ground Truth: Regardless of whether you reuse MOT16 frames or download the full MOT17 package, the new, accurate ground truth provided with MOT17 is indispensable for evaluation. Do not use MOT16 ground truth with MOT17 challenges.

- Specify Detection Source: When reporting results, clearly state which detection set (DPM, Faster-RCNN, or SDP) your tracking algorithm was evaluated on. This is crucial for reproducibility and fair comparison, as tracker performance is highly dependent on detector quality.

- Understand Data Organisation: The MOT17 dataset typically organises sequences by detector within subfolders (e.g.,

MOT17-02-DPM,MOT17-02-FRCNN,MOT17-02-SDP). Ensure your data loading scripts correctly access the desired detections and the unified ground truth files. - Consider Detector Impact: When designing or evaluating your tracker, consider how different detection qualities might affect its performance. A robust tracker should ideally perform well across all three detection sets, although performance metrics will naturally vary.

Frequently Asked Questions About MOT16 & MOT17 Data

- Is MOT17 simply MOT16 with new labels?

- Essentially, yes, for the video sequences themselves. MOT17 re-uses all the video frames from MOT16 but provides a new, more accurate ground truth and three different sets of object detections (DPM, Faster-RCNN, and SDP), which are also new to MOT17.

- Can I use MOT16 detections with MOT17 ground truth?

- No. While the frames are the same, the challenge of MOT17 is based on its new ground truth and its specific sets of detections. Using MOT16 detections would mean you are not evaluating your tracker under the conditions of the MOT17 benchmark, making your results incomparable.

- What do DPM, Faster-RCNN, and SDP stand for?

- These are names of different object detection algorithms:

- DPM: Deformable Part Model, an older but robust detection method.

- Faster-RCNN: Faster Region-based Convolutional Neural Network, a popular and powerful deep learning object detector.

- SDP: A detector often associated with Soft-NMS (Non-Maximum Suppression) for improved handling of overlapping detections.

They represent different levels of detection quality and characteristics, allowing for comprehensive tracker evaluation.

- Why are there so many entries for the same sequence name in the data tables?

- Each sequence (e.g., MOT17-13) is listed three times in the dataset due to the provision of three different detection sets: SDP, Faster-RCNN (FRCNN), and DPM. While the underlying video frames and ground truth for MOT17-13 are consistent, the input detections provided for your tracker will differ depending on whether you choose MOT17-13-SDP, MOT17-13-FRCNN, or MOT17-13-DPM.

- What is the primary benefit of the new ground truth in MOT17?

- The primary benefit is improved accuracy and consistency. A more accurate ground truth ensures that tracking algorithms are evaluated against a reliable benchmark, leading to more meaningful comparisons and a clearer understanding of a tracker's true performance. It reduces noise and potential errors in evaluation metrics.

Conclusion

In summary, the question of reusing MOT16 data for MOT17 is nuanced. While the underlying video sequences (frames) are indeed the same and can be physically reused to save download time, it is absolutely imperative to use the new ground truth and the specific detection sets provided for MOT17. This distinction is critical for ensuring the validity and comparability of your research results within the MOT Challenge framework. By understanding the evolutionary enhancements in MOT17, particularly the refined annotations and diverse detection inputs, researchers can leverage these powerful datasets to develop and rigorously evaluate state-of-the-art multi-object tracking algorithms.

If you want to read more articles similar to Reusing MOT16 & MOT17 Data: A Comprehensive Guide, you can visit the Automotive category.