12/06/2025

Optimising application performance is a perennial challenge for developers, and pinpointing the exact cause of a slowdown can feel like searching for a needle in a haystack. Visual Studio 2022 offers a strong answer: the new and improved Instrumentation Tool, released with Visual Studio 2022 version 17.6, gives detailed insight into your application's runtime behaviour. Unlike the sampling-based CPU Usage tool, it records exact timings and call counts. That makes it well suited to identifying blocked time, measuring average function execution time and, ultimately, improving your code's efficiency. Let's look at how this tool can change your performance profiling and help you build faster, more responsive applications.

- What's New with the Visual Studio Instrumentation Tool?

- Instrumentation Tool vs. CPU Usage Tool: A Comparison

- Getting Started: Instrumenting Your Application

- Analysing the Instrumentation Report

- Case Study: Mining for Performance Improvements

- Understanding Async Calls in the Instrumentation Call Tree (.NET)

- Frequently Asked Questions (FAQs)

- Conclusion

What's New with the Visual Studio Instrumentation Tool?

For those familiar with Visual Studio's profiling capabilities, the term "Instrumentation Tool" might ring a bell. Indeed, Visual Studio has long featured an instrumentation option. However, the iteration introduced in Visual Studio 2022 version 17.6 (and dynamically available from version 17.5) represents a significant leap forward, offering a suite of enhancements designed to make performance analysis more efficient and insightful than ever before. If you've previously used instrumentation, prepare for a vastly improved experience.

Here’s a breakdown of the key improvements:

- Significantly Faster and Less Resource-Intensive: The new tool boasts a substantial improvement in performance, running much quicker and consuming considerably less disk space during profiling. This means you can gather data on larger, more complex applications without the overhead bogging down your development machine.

- Enhanced Targeting for .NET Applications: For .NET developers, the tool now offers superior targeting capabilities. You can precisely scope your instrumentation down to specific functions, which drastically reduces overhead and yields more pertinent, focused data. This granular control ensures you're only profiling what truly matters.

- Introducing the Flame Graph: The new Flame Graph gives an intuitive, graphical representation of where your application spends the most time. This hierarchical visualisation lets you quickly identify "hot paths" (the most expensive code paths) and narrow down the areas most worth optimising. It's an excellent way to see your entire call stack at a glance and spot bottlenecks.

- Better Error Handling: The tool now comes with improved error detection and guidance, helping with common issues such as a missing /PROFILE linker flag in C++ projects. If you encounter persistent problems, the Developer Community is ready to provide support.

Instrumentation Tool vs. CPU Usage Tool: A Comparison

Understanding the distinction between the Instrumentation Tool and the CPU Usage Tool is crucial for effective performance profiling. While both aim to identify performance bottlenecks, they do so using different methodologies and provide distinct types of insights.

Here's a comparative overview:

| Feature/Characteristic | Instrumentation Tool (New) | CPU Usage Tool |
|---|---|---|
| Data Collection Method | Instrumentation (exact timing) | Sampling (statistical approximation) |
| Timing Basis | Wall Clock Time | CPU Utilisation Time |
| Precision | Exact call counts and timings | Approximate (based on samples) |
| Blocked Time Visibility | Yes (e.g., waiting for locks, I/O) | No (only active CPU work) |
| Overhead | Generally higher than sampling (though improved in new version) | Generally lower |
| Best Use Case | Pinpointing exact bottlenecks, contention, I/O issues, average function time. | Getting a general idea of CPU-bound issues, quick overview of hot spots. |
| Supported Languages | .NET (C#, VB, F#), C++ (v17.6 Preview 2+) | .NET, C++, JavaScript, Python |

The key takeaway is that the Instrumentation Tool excels at revealing non-CPU bound issues, such as threads waiting on locks, I/O operations, or network delays. Because it measures wall clock time, it captures the total time a function takes to execute, including any time spent waiting. This makes it invaluable for diagnosing responsiveness problems that the CPU Usage tool might miss.
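To make the distinction concrete, here is a small C# sketch. It is not how the profiler itself measures anything. It times a sleeping call and a busy loop, then compares elapsed wall clock time from Stopwatch with the process CPU time reported by Process.TotalProcessorTime. The helper name Measure and the 500 ms durations are arbitrary choices for illustration.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

// Returns the elapsed wall clock time and the CPU time this process consumed
// while running `work`. Process-wide CPU time also includes runtime background
// threads, so treat the CPU figure as approximate.
(TimeSpan Wall, TimeSpan Cpu) Measure(Action work)
{
    using var process = Process.GetCurrentProcess();
    TimeSpan cpuBefore = process.TotalProcessorTime;
    var stopwatch = Stopwatch.StartNew();
    work();
    stopwatch.Stop();
    process.Refresh(); // discard any cached process information before reading again
    TimeSpan cpuAfter = process.TotalProcessorTime;
    return (stopwatch.Elapsed, cpuAfter - cpuBefore);
}

// Blocked work: the thread sleeps, so wall clock time passes but little CPU is used.
var blocked = Measure(() => Thread.Sleep(500));

// CPU-bound work: a busy loop keeps one core occupied for about the same interval.
var busy = Measure(() =>
{
    var spin = Stopwatch.StartNew();
    while (spin.ElapsedMilliseconds < 500) { }
});

Console.WriteLine($"Sleep: wall {blocked.Wall.TotalMilliseconds:F0} ms, CPU {blocked.Cpu.TotalMilliseconds:F0} ms");
Console.WriteLine($"Busy:  wall {busy.Wall.TotalMilliseconds:F0} ms, CPU {busy.Cpu.TotalMilliseconds:F0} ms");
```

Both cases should take roughly half a second of wall clock time. Only the busy loop, however, should accumulate a comparable amount of CPU time. A sampling CPU profiler sees little of the sleeping call, whereas a wall clock measurement like the one the Instrumentation Tool reports captures it in full.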

Getting Started: Instrumenting Your Application

Ready to dive in and uncover your application's performance secrets? Here's a step-by-step guide to using the new Instrumentation Tool in Visual Studio 2022:

- Set Your Solution Configuration: In your Visual Studio project, ensure the solution configuration is set to Release. Profiling in Release mode captures the most accurate data, as debug-specific overhead is removed.

- Select Your Deployment Target: Choose your deployment target, which typically matches your project name for local deployment.

- Open the Performance Profiler: Press Alt+F2 (or navigate to Debug > Performance Profiler) to open the Performance Profiler window.

- Select the Instrumentation Tool: In the profiler window, check the box next to "Instrumentation". If it's greyed out, ensure no other tools are selected, as some require exclusive use. Also, verify your project meets the tool's requirements (e.g., correct project type, Release mode).

- (Optional) Start with Collection Paused: If you want to control precisely when data collection begins, enable the "Start with collection paused" option. Data will only be collected once you press the "Record" button in the diagnostic session view.

- Start the Tool: Click the "Start" button to begin the profiling session.

- Target Your Instrumentation (for .NET): A dialog will appear, allowing you to select which projects or even specific functions within your solution you wish to instrument. This is a powerful feature for reducing overhead and focusing your analysis. If you're unsure, you can profile the entire solution initially, or use the CPU Usage tool first to get a general idea of hot spots, then switch to Instrumentation for a deeper dive into specific areas. For C++ projects, you can select items to instrument starting from Visual Studio 2022 version 17.13 Preview 1.

- Execute Your Scenario: Once the tool is running, interact with your application, performing the actions or scenario you intend to profile.

- Stop Collection: When you've completed your scenario, click "Stop collection" or simply close your application. Visual Studio will then process and display the profiling data.

Analysing the Instrumentation Report

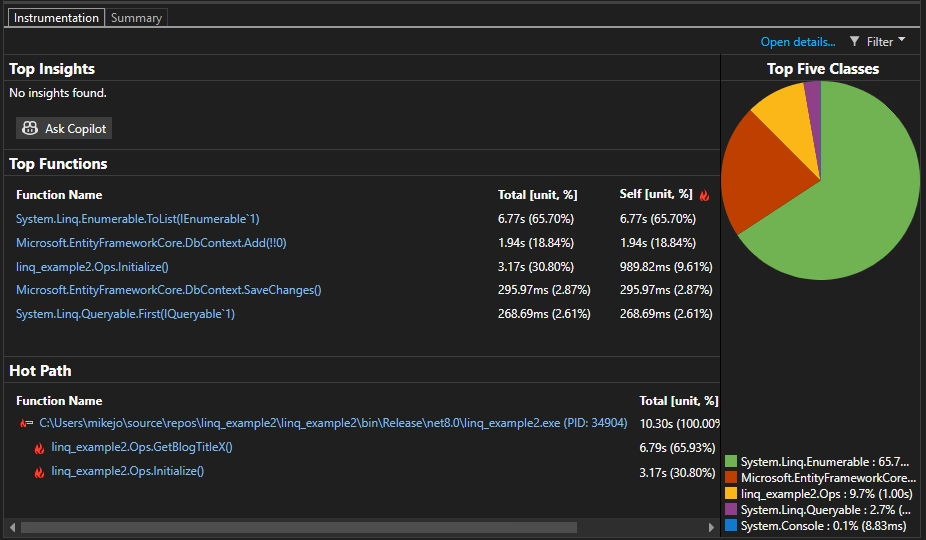

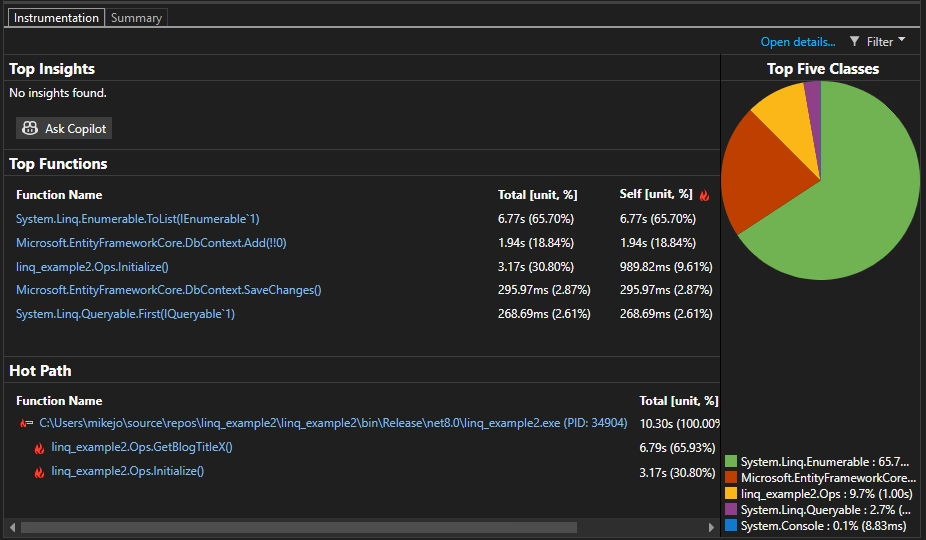

After the data collection is complete, Visual Studio presents a detailed report, offering various views to help you understand your application's performance characteristics. The initial summary page provides a high-level overview:

- Top Functions: This section lists functions ordered by the longest running time, with the most time-consuming function at the top.

- Hot Path: This highlights the call stack for the functions that are consuming the most wall clock time. These are often excellent starting points for investigation.

- Top Insights: If the profiler identifies any potential issues, they will be listed here with links to more information or suggestions (e.g., using Copilot if available).

To perform a deeper analysis, you'll want to explore the detailed report views. You can access these by clicking "Open details" on the summary page, or by clicking on a specific function in the "Top Functions" list. From the "Current View" dropdown, you can switch between different analytical perspectives:

| View Name | Description | Key Use |
|---|---|---|
| Caller/Callee | Detailed view of time spent in a specific function, its callers, and its callees. | Understanding function interactions and their cumulative impact. |
| Call Tree | Hierarchical view of the function call path. | Identifying the most expensive call paths (hot paths) and execution flow. |
| Modules | Time spent in individual modules, aggregated. | Pinpointing modules that are performance bottlenecks. |
| Functions | Time spent in individual functions, aggregated. | Identifying specific functions with high execution times or call counts. |
| Flame Graph | Hierarchical, visual representation of the function call path. | Quickly identifying hot paths and visualising where time is spent. |

In most views, the report is sorted by "Total [unit, %]" from highest to lowest. You can sort by other columns by clicking their headers. Double-clicking a function will often take you to its source code, highlighting where time is spent within that function. Key columns include:

- Total: The total wall clock time spent in the function and all functions it called. High "Total" values indicate functions that are major contributors to overall execution time.

- Self: The wall clock time spent exclusively within the function's own body, excluding time spent in functions it called. High "Self" values can point to inefficiencies directly within the function's implementation.

- Call Count: The exact number of times a function was invoked during the profiling session. This is invaluable for understanding execution frequency.

- Module: The name of the module containing the function.
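The relationship between the Total and Self columns can be sketched in a few lines of C#. The call tree below, including its function names and millisecond figures, is entirely hypothetical. The point is that a function's Total is its own Self time plus the Total of each function it calls. For simplicity, the sketch assumes each function appears only once in the tree, whereas real reports also aggregate repeated and recursive calls.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical call tree: time spent in each function's own body (Self), in ms.
var selfTimeMs = new Dictionary<string, double>
{
    ["Main"] = 5,
    ["LoadData"] = 20,
    ["ParseRecords"] = 60,
    ["SortResults"] = 15,
};

// Which functions each function calls.
var callees = new Dictionary<string, string[]>
{
    ["Main"] = new[] { "LoadData", "SortResults" },
    ["LoadData"] = new[] { "ParseRecords" },
    ["ParseRecords"] = Array.Empty<string>(),
    ["SortResults"] = Array.Empty<string>(),
};

// Total (inclusive time) = Self + the Total of every callee.
double Total(string function) =>
    selfTimeMs[function] + callees[function].Sum(callee => Total(callee));

foreach (var function in selfTimeMs.Keys)
{
    Console.WriteLine($"{function,-13} Self {selfTimeMs[function],4} ms   Total {Total(function),4} ms");
}
```

Here LoadData has a Total of 80 ms but a Self of only 20 ms. That pattern (high Total, modest Self) points you towards one of its callees, ParseRecords, rather than towards LoadData's own body.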

Case Study: Mining for Performance Improvements

To illustrate the power of the Instrumentation Tool, let's consider a practical example. Imagine we have a console application, AnalyzerBench, designed to process a large diagnostics session file (diagsession) from the Performance Profiler, specifically one tracing 4.3 million .NET object allocations. Our goal is to improve its performance.

After running the Instrumentation Tool and selecting the DataWarehouse and DotNetAllocAnalyzer projects (as these are where the core analysis happens), we examine the results, starting with the Flame Graph for a visual overview.

Looking at the Flame Graph, a prominent block immediately catches our eye: System.Threading.Monitor.Enter, consuming approximately 20% of the total execution time. This is a significant chunk! By right-clicking on this node and cross-referencing it in the Call Tree, we discover that its parent, ImportDataSource, is responsible for about 17% of the total time. The Call Tree view provides additional columns, including min, max, and average function times, thanks to the tool's exact call counts.

The Call Count for ImportDataSource shows three calls, with an average of around 5 seconds per call spent waiting on a lock. This reveals a critical contention issue. The first call acquires the lock immediately, while the subsequent two calls each wait approximately 8 seconds for the first data source to finish, giving an average of roughly (0 + 8 + 8) / 3 ≈ 5.3 seconds. In other words, two thread pool threads are synchronously blocked and unable to do any other work, which can lead to thread pool starvation and a severely degraded user experience.

Proposed Solution: To address this, we could explore concurrent data structures that eliminate the need for a lock. Alternatively, we could refactor the method to be asynchronous, using SemaphoreSlim.WaitAsync so that waiting callers no longer block thread pool threads. While these changes might be involved, the Instrumentation Tool has clearly highlighted a major performance bottleneck caused by thread contention.
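A minimal sketch of the asynchronous option follows, assuming the lock only guards a shared list. SemaphoreSlim(1, 1) acts as an async-friendly mutex: awaiting WaitAsync frees the calling thread instead of blocking it the way Monitor.Enter does. ImportDataSourceAsync, importedSources and the Task.Delay standing in for the import work are all hypothetical names, not the actual AnalyzerBench code.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical shared state that the original lock protected.
var importedSources = new List<string>();

// Initial count 1, maximum 1: at most one import runs inside the gate at a time.
var importGate = new SemaphoreSlim(1, 1);

// Hypothetical async replacement for ImportDataSource.
async Task ImportDataSourceAsync(string name)
{
    // Waiting callers are suspended here without occupying a thread pool thread.
    await importGate.WaitAsync();
    try
    {
        await Task.Delay(100); // stands in for the real import work
        importedSources.Add(name);
    }
    finally
    {
        importGate.Release(); // always release, even if the import throws
    }
}

// Start three imports concurrently, mirroring the three calls seen in the Call Tree.
await Task.WhenAll(
    ImportDataSourceAsync("source-1"),
    ImportDataSourceAsync("source-2"),
    ImportDataSourceAsync("source-3"));

Console.WriteLine($"Imported: {string.Join(", ", importedSources)}");
```

The three imports still run one at a time. While they wait, however, no thread pool thread is blocked, and that is the property that avoids starvation. The try/finally ensures the semaphore is released even if an import fails.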

Switching back to the Flame Graph, another area that stands out is List.Sort, which appears to consume another ~20% of our time. Cross-referencing this in the Call Tree reveals that List.Sort is called over 24,000 times, cumulatively spending 20 seconds sorting data. That is under a millisecond per call on average, so the cost comes from how often the sort runs rather than from any single slow sort. This strongly suggests the data is being sorted far more often than necessary.

Upon investigating the code, we find that the application sorts allocations for each unique type. While sorting is necessary for quick filtering in the profiler's graph, many allocations are likely already sorted as they are written to the diagsession file sequentially. The sorting is only truly necessary if allocations arrive out of order due to multiple threads.

Refined Code: A simple yet effective optimisation involves tracking the sort state of the allocation lists. By adding a boolean flag, allocationsSorted, we can conditionally sort only when necessary:

```csharp
// Starts as true: an empty list is trivially sorted. (If it defaulted to false,
// the &= below could never make it true, and every list would still be sorted.)
private bool allocationsSorted = true;

internal void AddAllocation(AllocationObject allocationObject)
{
    if (this.Allocations.Count > 0)
    {
        // Stays true only while each new allocation is no earlier than the previous one.
        this.allocationsSorted &= allocationObject.AllocTimeNs >= this.Allocations[this.Allocations.Count - 1].AllocTimeNs;
    }

    this.Allocations.Add(allocationObject);
}

public void FinalizeData()
{
    // Pay for the sort only if an out-of-order allocation was seen.
    if (!this.allocationsSorted)
    {
        this.Allocations.Sort(TypeObject.comparer);
        this.allocationsSorted = true;
    }
}
```

After implementing this small change and re-running the Instrumentation Tool, we observe a dramatic improvement: the time spent in List.Sort is nearly eliminated. In this particular trace, all allocations were already sorted, so no sorting was needed at all. That removed about 20 seconds from a 110-second trace, an improvement of nearly 20% (about 18%), achieved in less than an hour of profiling and code adjustments.
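To check the flag logic in isolation, here is a self-contained C# sketch. It strips the allocation objects down to plain long timestamps and adds a hypothetical sortCalls counter so you can see when the sort actually runs. The local names are illustrative, not taken from the original project.

```csharp
using System;
using System.Collections.Generic;

// Simplified stand-in for one type's allocation list: only timestamps are kept.
var allocTimesNs = new List<long>();
bool allocationsSorted = true; // an empty list is trivially sorted
int sortCalls = 0;             // hypothetical counter: how often the sort really runs

void AddAllocation(long allocTimeNs)
{
    if (allocTimesNs.Count > 0)
    {
        // Remains true only while every new timestamp is >= the previous one.
        allocationsSorted &= allocTimeNs >= allocTimesNs[allocTimesNs.Count - 1];
    }
    allocTimesNs.Add(allocTimeNs);
}

void FinalizeData()
{
    if (!allocationsSorted)
    {
        allocTimesNs.Sort();
        allocationsSorted = true;
        sortCalls++;
    }
}

// In-order arrivals (the common case in the trace): no sort is needed.
foreach (var t in new long[] { 10, 20, 20, 35 }) AddAllocation(t);
FinalizeData();
Console.WriteLine($"After in-order data: sorts = {sortCalls}");

// One out-of-order arrival (e.g. from another thread) forces exactly one sort.
AddAllocation(15);
FinalizeData();
Console.WriteLine($"After out-of-order data: sorts = {sortCalls}, order = {string.Join(", ", allocTimesNs)}");
```

With in-order input, the sort is skipped entirely. A single out-of-order timestamp flips the flag and triggers exactly one sort in FinalizeData.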

Understanding Async Calls in the Instrumentation Call Tree (.NET)

For .NET developers, Visual Studio 2022 version 17.13 Preview 2 and later introduce an enhanced way to view asynchronous calls within the call tree. Views representing a visual call tree now nest .NET asynchronous calls directly under the functions where the asynchronous call was initiated. This creates a unified and more intuitive stack trace, making it significantly easier to follow the execution flow through asynchronous operations and identify bottlenecks within them.

Async nodes are clearly marked with an [Async Call] descriptor. You can toggle this "stitching" of asynchronous call stacks via the "Stitch Async Stacks" option in the profiler's Summary view filters, which is enabled by default. This feature greatly simplifies the analysis of modern asynchronous .NET applications.
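To see why stitching helps, consider a minimal async chain. This C# sketch is hypothetical: LoadAsync and ParseAsync are illustrative names. It simply prints the managed thread ID before and after an await.

```csharp
using System;
using System.Threading.Tasks;

// LoadAsync awaits ParseAsync; this caller/callee relationship is what a stitched
// call tree represents by nesting ParseAsync under LoadAsync.
async Task<int> LoadAsync()
{
    int before = Environment.CurrentManagedThreadId;
    await Task.Delay(50); // simulated I/O: the method is suspended here
    int after = Environment.CurrentManagedThreadId;
    // The continuation may resume on a different thread pool thread, and the code
    // that called LoadAsync is no longer on the physical stack at this point.
    Console.WriteLine($"LoadAsync: started on thread {before}, resumed on thread {after}");
    return await ParseAsync();
}

async Task<int> ParseAsync()
{
    await Task.Yield(); // forces the rest of the method to run as a continuation
    return 42;          // stands in for real parsing work
}

int result = await LoadAsync();
Console.WriteLine($"Result: {result}");
```

On the physical stack, the code after each await is resumed by the thread pool. Without stitching, those frames therefore appear detached from the caller that started the operation. With Stitch Async Stacks enabled, ParseAsync should instead appear nested under LoadAsync as an [Async Call] node.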

Frequently Asked Questions (FAQs)

- Q: Why should I use the Instrumentation Tool over the CPU Usage Tool?

- A: The Instrumentation Tool provides exact wall clock times and call counts, making it ideal for identifying issues like thread contention, I/O waits, and network delays that the CPU Usage Tool (which focuses on CPU utilisation) might miss. It's best for precise bottleneck identification.

- Q: What does "wall clock time" mean in the context of profiling?

- A: Wall clock time refers to the actual elapsed time a function takes to execute, as measured by a regular clock. This includes time spent waiting for resources (like locks or I/O) in addition to CPU processing time. It's the real-world time your users experience.

- Q: Can I use the Instrumentation Tool for C++ projects?

- A: Yes, starting with Visual Studio 2022 version 17.6 Preview 2, the Instrumentation Tool supports C/C++. From version 17.13 Preview 1, you can also select specific items to instrument for C++.

- Q: How do I reduce the overhead of instrumentation?

- A: The new tool is already much faster. Further reduce overhead by targeting your instrumentation to specific projects or even individual functions, especially for .NET applications. Profiling in Release mode is also crucial.

- Q: What is the Flame Graph and how does it help?

- A: The Flame Graph is a visual, hierarchical representation of your call stack, where the width of each block indicates the time spent in that function and its children. It helps you quickly spot "hot paths" – the most time-consuming sequences of calls – at a glance, allowing for rapid identification of bottlenecks.

Conclusion

The revitalised Instrumentation Tool in Visual Studio 2022 is an incredibly powerful asset for any developer keen on optimising application performance. By providing precise timing, exact call counts, and a clear view of wall clock time, it illuminates bottlenecks that were previously difficult to diagnose, especially those related to contention and waiting. Features like enhanced .NET targeting, the intuitive Flame Graph, and improved error handling make it more accessible and effective than ever.

As demonstrated in our case study, a relatively small investment of time in profiling can yield significant performance improvements: a load-time improvement of nearly 20% in our example. So, next time your application feels sluggish, don't just guess; use the Instrumentation Tool. It will guide you directly to the heart of the problem, helping you create faster, more efficient and, ultimately, more satisfying software experiences for your users.
