25/02/2008

Multi-Object Tracking (MOT) is a central and difficult task in computer vision. Detecting, identifying, and following multiple moving objects through a video sequence underpins applications ranging from autonomous driving and robotics to surveillance and sports analytics. Many sophisticated algorithms have emerged over the years, yet efficiency, accuracy, and robustness remain open goals. FairMOT addresses them with a one-shot tracker built directly on CenterNet. Its individual components are not entirely new, but the way it combines them, and the insights it offers about training detection and re-identification together, make it a strong reference point for one-shot tracking.

Understanding FairMOT's Core Philosophy

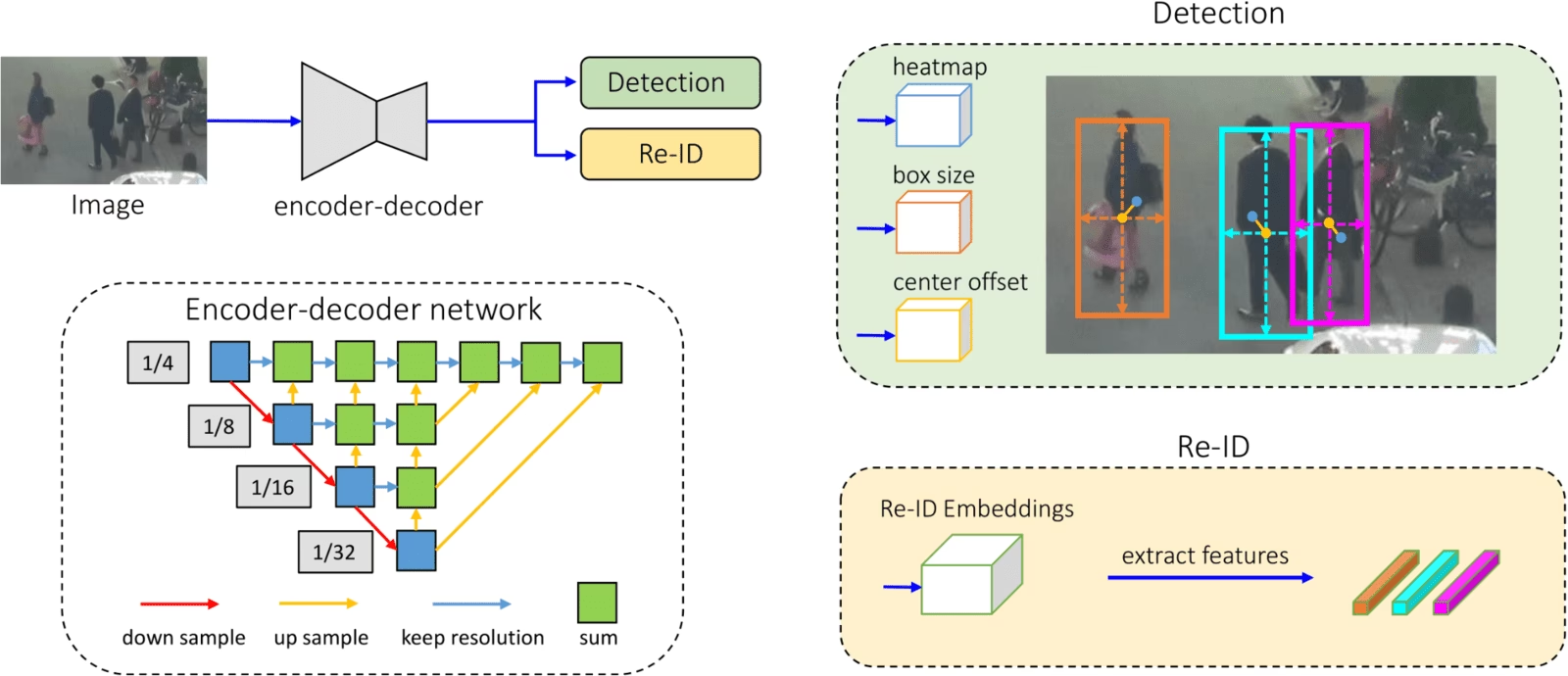

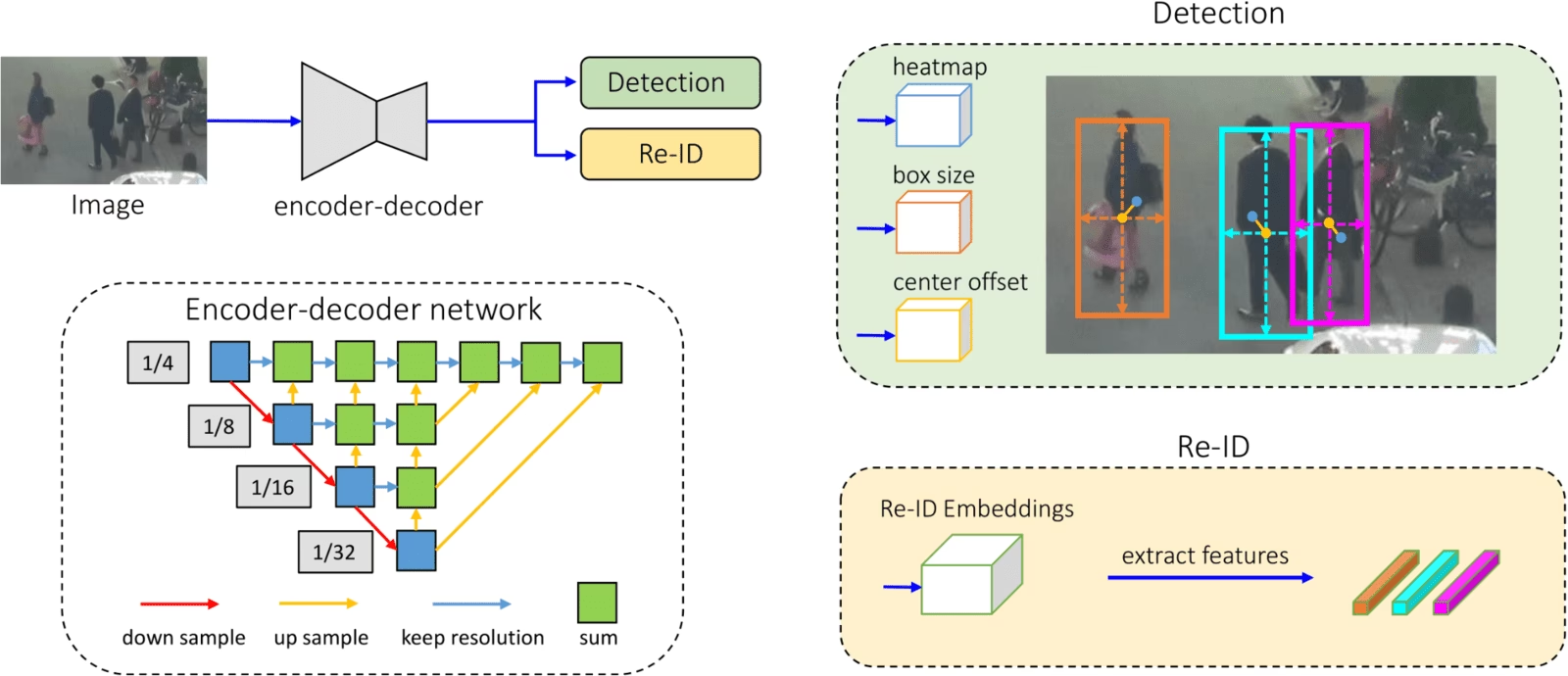

FairMOT distinguishes itself by adopting a unified, one-shot tracking strategy. Unlike traditional two-stage methods that often involve separate detection and re-identification (re-ID) pipelines, FairMOT integrates these functionalities into a single, cohesive framework. This integration is not merely a matter of convenience; it is a strategic decision aimed at fostering synergistic improvements between detection and re-ID. By jointly learning to detect objects and extract their unique appearance features, FairMOT can leverage the complementary information available in both tasks, leading to more accurate and robust tracking, especially in complex scenarios with occlusions and crowded environments.

The algorithm's architecture is a testament to its elegant design. At its heart lies CenterNet, a popular and highly effective anchor-free object detection framework. CenterNet excels at identifying objects by predicting their centers and then regressing their dimensions. FairMOT ingeniously extends this by simultaneously predicting object centers, their sizes, and crucially, their re-ID embeddings. This parallel processing of information is what enables its "one-shot" nature, meaning it performs detection and re-ID in a single forward pass.
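To make the center-based idea concrete, here is a minimal sketch of how per-pixel outputs could be turned into detections: keep every heatmap cell that is a local maximum above a threshold, then read the size and embedding stored at that same cell. The function name `decode_centers`, the 3x3 neighbourhood, and the threshold value are illustrative assumptions, not taken from the FairMOT code base.

```python
def decode_centers(heatmap, sizes, embeddings, threshold=0.4):
    """Return one detection per local maximum of the center heatmap.

    heatmap:    H x W nested list of scores in [0, 1]
    sizes:      H x W nested list of (width, height) tuples
    embeddings: H x W nested list of feature vectors
    All names and the threshold are hypothetical, for illustration only.
    """
    height, width = len(heatmap), len(heatmap[0])
    detections = []
    for y in range(height):
        for x in range(width):
            score = heatmap[y][x]
            if score < threshold:
                continue
            # A cell is a peak if nothing in its 3x3 neighbourhood scores higher.
            neighbours = [
                heatmap[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            if score < max(neighbours):
                continue
            w, h = sizes[y][x]
            detections.append({
                "center": (x, y),
                "box": (x - w / 2, y - h / 2, x + w / 2, y + h / 2),
                "score": score,
                "embedding": embeddings[y][x],
            })
    return detections


# Toy 4x4 example with two clear peaks.
toy_heatmap = [
    [0.1, 0.2, 0.1, 0.0],
    [0.2, 0.9, 0.3, 0.0],
    [0.1, 0.3, 0.2, 0.1],
    [0.0, 0.0, 0.1, 0.8],
]
toy_sizes = [[(2.0, 4.0)] * 4 for _ in range(4)]
toy_embeddings = [[[float(y), float(x)] for x in range(4)] for y in range(4)]
detections = decode_centers(toy_heatmap, toy_sizes, toy_embeddings)
```

Because the box size and the embedding are looked up at the same location as the peak, a single pass over the outputs yields everything the tracker needs for each object.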

Key Innovations and Discoveries

While building upon CenterNet, FairMOT contributes several ideas that matter for effective MOT:

1. Joint Learning of Detection and Re-ID

The most significant contribution of FairMOT is its joint learning approach. By training the network to perform both object detection and re-identification simultaneously, the model learns to extract discriminative features that are beneficial for both tasks. This shared representation allows the detection module to be more aware of object appearances, leading to better handling of similar-looking objects and improved tracking consistency. Conversely, the re-ID module benefits from the accurate localization provided by the detection head, ensuring that appearance features are extracted from the correct object regions.

2. Improved Feature Fusion

FairMOT employs sophisticated feature fusion techniques to effectively combine information from different levels of the network. This allows the model to capture both high-level semantic information and low-level spatial details, which are crucial for accurate tracking in varying conditions. The fusion mechanism is designed to enhance the robustness of the re-ID embeddings, making them less susceptible to noise and variations in object appearance due to illumination changes or pose variations.

3. Handling of Occlusions and Identity Switches

A common pitfall in MOT is the tendency for trackers to switch identities when objects become occluded or when similar objects are in close proximity. FairMOT addresses this by leveraging the rich appearance information learned during the joint training process. The strong re-ID embeddings help to maintain object identities even during periods of temporary occlusion. Furthermore, the algorithm incorporates mechanisms to gracefully handle re-associations once objects re-emerge, minimizing identity switches.
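The role of the embeddings in keeping identities stable can be sketched as follows: each existing track remembers its last embedding, and new detections are matched to the track whose embedding is closest in cosine distance. This sketch uses greedy matching for brevity, which is a deliberate simplification; practical trackers typically use an optimal assignment solver and a motion model as well. The function names and the `max_distance` value are assumptions made for illustration.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def associate(tracks, detections, max_distance=0.4):
    """Greedily match track embeddings to detection embeddings.

    tracks:     dict of track_id -> last known embedding
    detections: list of embeddings from the current frame
    Returns (matches, unmatched), where matches maps track_id -> detection
    index and unmatched lists detection indices that start new tracks.
    """
    pairs = sorted(
        (cosine_distance(emb, det), track_id, det_idx)
        for track_id, emb in tracks.items()
        for det_idx, det in enumerate(detections)
    )
    matches, used = {}, set()
    for dist, track_id, det_idx in pairs:
        if dist > max_distance:
            break  # all remaining pairs are even further apart
        if track_id in matches or det_idx in used:
            continue
        matches[track_id] = det_idx
        used.add(det_idx)
    unmatched = [i for i in range(len(detections)) if i not in used]
    return matches, unmatched


tracks = {1: [1.0, 0.0], 2: [0.0, 1.0]}
frame_embeddings = [[0.1, 0.9], [0.9, 0.1], [-1.0, -1.0]]
matches, unmatched = associate(tracks, frame_embeddings)
```

A track that finds no match in a frame (for example, during an occlusion) can be kept alive for a few frames so that its stored embedding is still available when the object reappears.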

Performance Benchmarks and Datasets

FairMOT has demonstrated state-of-the-art performance on several widely recognized MOT benchmarks, including:

- MOT16

- MOT17

- MOT20

These datasets present diverse challenges, encompassing varying camera viewpoints, object densities, and motion patterns. The success of FairMOT on these benchmarks underscores its generalizability and effectiveness across different scenarios.

Running Tracking with FairMOT

Getting FairMOT up and running is a straightforward process, thanks to its efficient implementation. The project typically provides pre-trained models that can be easily utilised for inference.

Light Version for Quick Deployment

For those seeking a balance between speed and accuracy, a light version of FairMOT is available. This version is optimised for faster inference while still delivering impressive results. To run tracking using the light version, you can typically execute a command similar to the following:

python track.py --config_path exps/mot/fairmot_half.yaml --eval mot17 --load_model [path_to_light_model.pth]

After obtaining the tracking results in the specified format (often `.txt` files), these can be submitted to the MOT challenge evaluation server to obtain quantitative metrics such as MOTA (Multi-Object Tracking Accuracy), MOTP (Multi-Object Tracking Precision), IDF1, and more. The light version has been shown to achieve commendable scores, such as 68.5 MOTA on the MOT17 test set, making it suitable for applications where real-time performance is a priority.
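For readers who post-process or generate result files themselves, the sketch below writes tracked boxes in the comma-separated MOTChallenge layout: frame, track id, left, top, width, height, confidence, followed by three placeholder fields set to -1 for 2D tracking. The helper names are hypothetical and the two-decimal formatting is an arbitrary choice.

```python
def format_mot_line(frame, track_id, left, top, width, height, conf=1.0):
    """Format one tracked box as a MOTChallenge-style result line.

    The trailing -1 values fill the world-coordinate fields, which are
    unused for 2D tracking.
    """
    return (f"{frame},{track_id},{left:.2f},{top:.2f},"
            f"{width:.2f},{height:.2f},{conf:.2f},-1,-1,-1")


def write_results(path, rows):
    """Write an iterable of (frame, id, left, top, width, height) rows."""
    with open(path, "w") as handle:
        for row in rows:
            handle.write(format_mot_line(*row) + "\n")


example_line = format_mot_line(1, 3, 100.0, 50.5, 40.0, 80.0)
# example_line == "1,3,100.00,50.50,40.00,80.00,1.00,-1,-1,-1"
```

One file per sequence, with one line per tracked object per frame, is the usual unit that evaluation tools expect.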

Baseline Model for Enhanced Performance

For applications where maximum accuracy is paramount, FairMOT offers a more powerful baseline model. This model, often referred to by a checkpoint name such as fairmot_dla34.pth, is trained with a more extensive configuration and potentially larger datasets, leading to superior performance. Using this baseline model can yield results exceeding 73 MOTA on the MOT17 test set. The command to utilise this model would be analogous:

python track.py --config_path exps/mot/fairmot_dla34.yaml --eval mot17 --load_model [path_to_baseline_model.pth]

The choice between the light and baseline versions depends on the specific requirements of your application. If real-time processing is critical, the light version might be sufficient. However, if achieving the highest possible tracking accuracy is the primary goal, investing in the baseline model is recommended.

Technical Details and Architecture

FairMOT's architecture is a clever adaptation of CenterNet. It typically employs a deep backbone network (e.g., DLA-34) for feature extraction. The output feature maps from the backbone are then fed into multiple heads:

- Center Heatmap Head: Predicts the likelihood of an object center at each spatial location.

- Object Size Head: Regresses the width and height of the bounding box for detected object centers.

- Re-ID Embedding Head: Extracts a compact, discriminative feature vector (embedding) for each detected object.

The loss function for training FairMOT is a multi-task loss that combines:

- Center Heatmap Loss: Typically a focal loss, penalising incorrect center predictions.

- Object Size Loss: Often an L1 loss on the bounding box dimensions.

- Re-ID Loss: A triplet loss or cross-entropy loss is commonly used to ensure that embeddings from the same object are close in the embedding space, while embeddings from different objects are far apart.

This comprehensive loss formulation guides the network to learn features that are simultaneously good for detection and re-identification.
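The three loss terms can be sketched in plain Python on toy inputs. The heatmap term below is the penalty-reduced focal loss used by CenterNet-style detectors, the size term is a mean L1 error, and the re-ID term is a softmax cross-entropy over identity classes. The exponents `alpha=2` and `beta=4`, the function names, and the fixed weights in `total_loss` are assumptions for illustration; how the terms are actually weighted in a given implementation may differ.

```python
import math


def center_focal_loss(pred, target, alpha=2.0, beta=4.0, eps=1e-6):
    """Penalty-reduced focal loss over a flattened heatmap.

    target is 1.0 at object centers and a value in [0, 1) elsewhere
    (for example, a Gaussian bump around each center).
    """
    loss, num_pos = 0.0, 0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        if t == 1.0:
            loss -= (1.0 - p) ** alpha * math.log(p)
            num_pos += 1
        else:
            loss -= (1.0 - t) ** beta * p ** alpha * math.log(1.0 - p)
    return loss / max(num_pos, 1)


def size_l1_loss(pred_sizes, true_sizes):
    """Mean L1 error over (width, height) pairs at object centers."""
    total = sum(abs(pw - tw) + abs(ph - th)
                for (pw, ph), (tw, th) in zip(pred_sizes, true_sizes))
    return total / max(len(true_sizes), 1)


def reid_cross_entropy(logits, identity):
    """Softmax cross-entropy for one object's identity classification."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum - logits[identity]


def total_loss(heat, size, reid, w_det=1.0, w_id=1.0):
    # Plain weighted sum; the weights are illustrative assumptions.
    return w_det * (heat + size) + w_id * reid


heat = center_focal_loss([0.9, 0.1], [1.0, 0.0])
size = size_l1_loss([(2.0, 4.0)], [(3.0, 4.5)])
reid = reid_cross_entropy([2.0, 0.5, 0.1], identity=0)
combined = total_loss(heat, size, reid)
```

Treating re-ID as classification over training identities means the embedding is whatever feature vector feeds the final classifier, which is then reused at inference time for matching.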

Advantages of FairMOT

FairMOT offers several compelling advantages over traditional multi-stage MOT trackers:

- Efficiency: The one-shot nature significantly reduces computational overhead and inference time, making it suitable for real-time applications.

- Simplicity: By unifying detection and re-ID, the overall pipeline is simplified, reducing the complexity of implementation and deployment.

- Performance: The joint learning strategy leads to improved accuracy and robustness, particularly in challenging scenarios.

- End-to-End Trainability: The entire framework can be trained end-to-end, allowing for optimal learning of shared representations.

Frequently Asked Questions (FAQs)

Q1: What is MOTA and why is it important?

MOTA (Multi-Object Tracking Accuracy) is a primary metric used to evaluate the performance of MOT algorithms. It combines three kinds of error, namely false positives, false negatives (missed objects), and identity switches, and measures them relative to the number of ground-truth objects. A higher MOTA score indicates better tracking performance.
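As a small worked example, MOTA is computed as one minus the ratio of total errors to ground-truth objects, with all counts summed over every frame of the sequence. The function and argument names below are illustrative.

```python
def mota(false_negatives, false_positives, id_switches, num_ground_truth):
    """MOTA = 1 - (FN + FP + IDSW) / GT, with counts summed over all frames."""
    if num_ground_truth <= 0:
        raise ValueError("num_ground_truth must be positive")
    errors = false_negatives + false_positives + id_switches
    return 1.0 - errors / num_ground_truth


# 100 misses, 50 false alarms and 10 identity switches over 1000 objects.
score = mota(100, 50, 10, 1000)  # approximately 0.84, reported as 84.0 MOTA
```

Because the errors are not capped by the number of ground-truth objects, MOTA can become negative for a tracker that produces many false positives.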

Q2: Can FairMOT handle crowded scenes?

Yes, FairMOT is designed to perform well in crowded scenes. The joint learning of detection and re-ID, coupled with strong re-ID embeddings, helps to distinguish between similar-looking objects and maintain their identities even when they are closely packed.

Q3: What are the hardware requirements for running FairMOT?

While the light version can run on less powerful hardware, for optimal performance and faster inference, a GPU is highly recommended. The exact requirements will depend on the specific model variant and the resolution of the input video.

Q4: How does FairMOT compare to other one-shot trackers?

FairMOT stands out for its joint training of detection and re-ID and its tight integration with the CenterNet framework. It often achieves a superior balance between accuracy and speed compared to many other one-shot or even two-stage trackers, particularly on challenging datasets.

Q5: Where can I find the code and pre-trained models for FairMOT?

The official FairMOT repository, typically hosted on platforms like GitHub, is the best place to find the source code, detailed instructions, and links to pre-trained models for both the light and baseline versions.

Conclusion

FairMOT represents a significant stride forward in the field of Multi-Object Tracking. By elegantly integrating detection and re-identification within a unified, one-shot framework built upon CenterNet, it achieves an impressive balance of efficiency, simplicity, and accuracy. Its innovative approach to joint learning and feature fusion allows it to tackle complex tracking challenges effectively, making it a valuable tool for a wide range of computer vision applications. Whether you need a fast, real-time tracker or the highest possible accuracy, FairMOT offers a compelling solution, backed by strong performance on leading benchmarks and accessible implementation details.
