24/02/2011

In our increasingly digital world, algorithms are ubiquitous, influencing nearly every facet of our daily lives. From the search results you see to the recommendations you receive for films or products, and even the intricate systems that manage traffic flow, algorithms are silently at work. But what exactly is an algorithm? Where did this term originate, and how do these complex systems truly function? At its simplest, an algorithm is a meticulously defined series of instructions or rules designed to solve a particular problem or accomplish a specific task. Whether performing complex calculations, efficiently sorting vast datasets, or even guiding autonomous vehicles, algorithms are the fundamental bedrock upon which these advanced processes are built. Let us delve deeper into this fascinating subject to truly comprehend their profound impact.

- The Enduring Legacy of Algorithms: A Historical Journey

- Deconstructing the Algorithm: What Are They, Really?

- Algorithms at the Forefront of Artificial Intelligence

- Comparing AI Learning Paradigms

- The Transformative Impact of AI Algorithms Across Industries

- Frequently Asked Questions (FAQs) About Algorithms

- The Indispensable Core of Our Intelligent Future

The Enduring Legacy of Algorithms: A Historical Journey

The history of algorithms stretches back many centuries, long predating the digital age and the advent of modern computing. Their evolution reflects humanity's continuous quest to organise information, solve problems systematically, and automate repetitive tasks. Understanding this lineage provides crucial context for their indispensable role today.

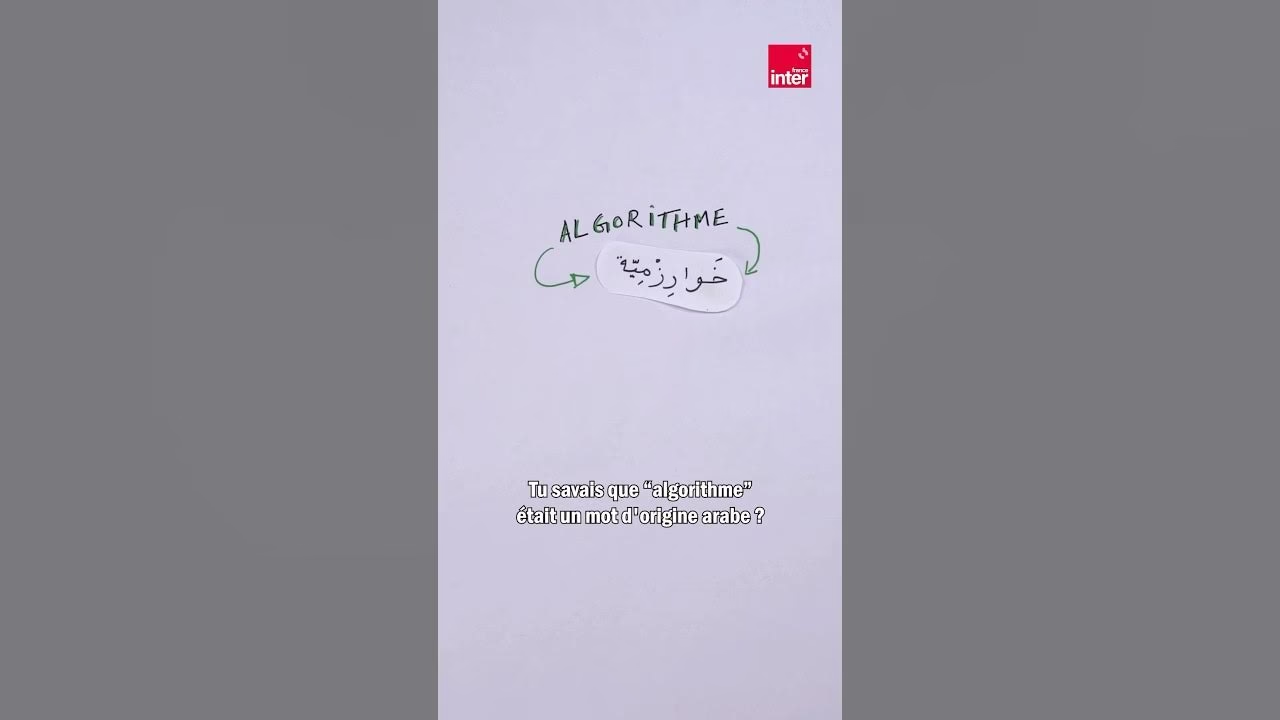

Ancient Origins: Al-Khwarizmi and Beyond

The term 'algorithm' itself is a testament to its ancient roots: it derives from the name of the Persian mathematician Muhammad ibn Musa al-Khwarizmi, who lived in the 9th century and whose works laid the foundations for modern algebra. His treatise, 'On the Calculation with Hindu Numerals', described the decimal positional numeral system and methods for performing arithmetic with it. The Latinisation of his name, 'Algorismi', eventually gave us the word 'algorithm', which initially referred to the rules for performing arithmetic using Hindu-Arabic numerals. At this early stage, algorithms were primarily conceptual tools, used to solve straightforward mathematical problems, such as calculating areas, solving linear equations, or performing basic financial transactions.

However, the concept of a systematic procedure for problem-solving existed even before Al-Khwarizmi. Ancient civilisations devised step-by-step methods for various tasks, from building pyramids to astronomical calculations. Euclid's algorithm, for instance, described in his 'Elements' around 300 BC, provides a method for computing the greatest common divisor of two numbers, demonstrating the timeless nature of algorithmic thinking.
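
As a small illustration, Euclid's method can be sketched in a few lines of Python. This is the common modern formulation using the remainder operation, not a transcription of the text in 'Elements', and the function name `gcd` is simply chosen for this example.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers."""
    while b != 0:
        # Replace the pair (a, b) with (b, a mod b); the divisors
        # shared by both numbers are unchanged by this step.
        a, b = b, a % b
    return a


print(gcd(48, 18))  # 6
```

Each pass through the loop makes the second number strictly smaller, so the procedure is guaranteed to stop.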

The Dawn of the Digital Age: Algorithms Meet Computers

It was not until the 20th century, with the revolutionary advent of electronic computers, that the significance and potential of algorithms truly exploded. The early pioneers of computing quickly realised that for these nascent machines to perform any task, they would require precise, unambiguous sequences of instructions. This necessity made algorithms absolutely indispensable to the burgeoning field of computer programming. Without a clear set of steps, a computer, being a purely logical machine, could not function.

From the 1930s onwards, visionary minds such as Alan Turing and John von Neumann laid much of the theoretical groundwork. Turing's concept of the 'Turing machine' provided a formal model of computation and what could be computed, effectively defining the limits of algorithms. Following this, from the 1950s, pioneers like John McCarthy began to explore the ambitious idea of artificial intelligence, laying the groundwork for creating systems capable of simulating human-like cognitive abilities. As computer hardware advanced, so too did the complexity and sophistication of the algorithms developed to run on them.

Over the subsequent decades, algorithms diversified and grew in complexity, giving rise to specialised domains such as machine learning and deep learning, which have become central pillars of modern AI. This progression has transformed algorithms from abstract mathematical concepts into practical tools that power our digital world.

Deconstructing the Algorithm: What Are They, Really?

To reiterate and elaborate, an algorithm is a finite set of well-defined, unambiguous instructions or rules designed to solve a problem or accomplish a specific task. In the realm of computing, and particularly within artificial intelligence, algorithms are absolutely essential because they enable machines to process vast quantities of data, learn from experience, and make informed decisions. Think of an algorithm as a highly detailed recipe: it specifies every ingredient and every step in a precise order to achieve a desired outcome. The key characteristic of an algorithm is its precision; every step must be clear and executable, leaving no room for ambiguity.

Regardless of their complexity, all algorithms share several core properties:

- Input: They take zero or more inputs.

- Output: They produce one or more outputs.

- Definiteness: Each step must be clear and unambiguous.

- Finiteness: They must terminate after a finite number of steps.

- Effectiveness: Each step must be sufficiently basic that it can be carried out by a person using only paper and pencil.
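
To make these properties concrete, here is a minimal sketch of a very simple algorithm, finding the largest number in a list, with comments pointing at each property. The function name `largest` is hypothetical and used only for illustration.

```python
def largest(values: list[float]) -> float:
    # Input: a non-empty list of numbers.
    if not values:
        raise ValueError("values must not be empty")
    best = values[0]
    # Finiteness: the loop runs once per remaining element, then stops.
    for v in values[1:]:
        # Definiteness and effectiveness: each step is one unambiguous
        # comparison, simple enough to carry out by hand.
        if v > best:
            best = v
    # Output: exactly one value.
    return best


print(largest([3, 9, 2]))  # 9
```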

Algorithms at the Forefront of Artificial Intelligence

In the rapidly expanding field of artificial intelligence, algorithms play an absolutely crucial role. They are the brains behind the brawn, allowing machines not only to process data at incredible speeds but also to learn, adapt, and even reason in varied and often unpredictable situations. Without sophisticated algorithms, AI would remain a theoretical concept, unable to translate data into actionable insights or intelligent behaviours.

Algorithms in AI can broadly be classified into several primary categories, each with unique applications and distinct methodologies:

Supervised Learning: Learning from Labelled Data

This type of algorithm requires 'labelled' data, meaning examples where the input data is paired with its corresponding correct output. The algorithm 'learns' from these meticulously prepared examples to make accurate predictions on new, unseen data. Imagine teaching a child to identify different animals by showing them pictures of cats labelled 'cat' and dogs labelled 'dog'. Over time, the child learns to correctly classify a new animal picture. Similarly, a supervised learning algorithm for image recognition might be trained with thousands of images of cats and dogs, each correctly identified. Once trained, it can then accurately perform classification on an unknown image based on what it has learned. Common applications include spam detection (classifying emails as spam or not), medical diagnosis (predicting disease based on symptoms and test results), and credit scoring (predicting creditworthiness).
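
The cat-and-dog idea can be sketched with one of the simplest supervised methods, a 1-nearest-neighbour classifier: a new example receives the label of the most similar labelled example. The measurements below (weight and height) are made-up values chosen for illustration, and real image recognition uses far richer features and models.

```python
import math

# Hypothetical labelled training data: (weight_kg, height_cm) -> label.
TRAINING = [
    ((4.0, 25.0), "cat"),
    ((5.0, 23.0), "cat"),
    ((20.0, 55.0), "dog"),
    ((30.0, 60.0), "dog"),
]


def predict(features):
    """Return the label of the closest training example."""
    _, label = min(TRAINING, key=lambda ex: math.dist(ex[0], features))
    return label


print(predict((4.5, 24.0)))   # cat
print(predict((25.0, 58.0)))  # dog
```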

Unsupervised Learning: Finding Structure in Unlabelled Data

In stark contrast to its supervised counterpart, unsupervised learning does not rely on labelled data. Here, the algorithm explores datasets to identify underlying structures, patterns, or relationships without any prior knowledge of what those patterns might be. No 'right' answer is provided. A classic example is clustering, where similar objects are grouped together without needing to know their categories beforehand. For instance, an algorithm might analyse customer purchasing behaviour and group customers into different segments based on their shared buying habits, without being told what those segments are. Other applications include anomaly detection (identifying unusual patterns that might indicate fraud or system failures) and data compression.
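
The customer-segmentation example can be sketched with a basic k-means loop, one common clustering method. The spending figures are invented, the data is one-dimensional to keep the code short, and the deterministic choice of starting centres is an assumption made here so that the result is repeatable.

```python
def kmeans_1d(points, k=2, iterations=10):
    """Group 1-D points into k clusters with a basic k-means loop."""
    ordered = sorted(points)
    # Deterministic start (an assumption for repeatability):
    # evenly spaced picks from the sorted data.
    step = max(1, len(ordered) // k)
    centres = ordered[::step][:k]
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centres]
        # Assignment step: each point joins its nearest centre.
        for p in points:
            nearest = min(range(len(centres)), key=lambda i: abs(p - centres[i]))
            clusters[nearest].append(p)
        # Update step: move each centre to the mean of its cluster
        # (keep the old centre if the cluster is empty).
        centres = [sum(c) / len(c) if c else centres[i]
                   for i, c in enumerate(clusters)]
    return clusters


# Hypothetical monthly spend per customer; no labels are supplied.
spend = [12, 15, 14, 210, 190, 205]
print(kmeans_1d(spend))  # [[12, 15, 14], [210, 190, 205]]
```

The algorithm is never told that there are "low spenders" and "high spenders"; the two groups emerge from the distances between the values.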

Reinforcement Learning: Learning Through Interaction

In this dynamic framework, an 'agent' learns to make decisions by interacting with an environment. The agent receives rewards or penalties based on the success or failure of its actions, which incentivises it to improve its performance over time. It's akin to training a pet through positive reinforcement. The agent explores various actions, observes the consequences, and gradually learns which actions lead to the highest cumulative reward. This approach is extensively used in applications like training AI to play complex games (e.g., AlphaGo, which plays the board game Go), robotics (teaching robots to navigate and perform tasks), and complex system optimisation, such as traffic light control or resource management in data centres. It's particularly powerful when direct human supervision is impractical or impossible.
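
The reward-driven loop can be sketched with tabular Q-learning, one widely used reinforcement learning method, on a toy environment. Everything here is an assumption made for illustration: a corridor of five cells, a reward of 1 for reaching the right-hand end, and untuned values for the learning rate, discount factor, and exploration rate.

```python
import random

N_STATES = 5          # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)    # index 0 moves left, index 1 moves right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2   # illustrative values, not tuned


def train(episodes=200, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]   # q[state][action index]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            if rng.random() < EPSILON:
                a = rng.randrange(2)             # explore: random action
            else:
                # Exploit: best-known action, breaking ties at random.
                a = max(range(2), key=lambda i: (q[state][i], rng.random()))
            # Walls at both ends keep the agent inside the corridor.
            nxt = min(max(state + ACTIONS[a], 0), N_STATES - 1)
            reward = 1.0 if nxt == N_STATES - 1 else 0.0
            # Q-learning update: move the estimate toward the reward
            # plus the discounted value of the best next action.
            q[state][a] += ALPHA * (reward + GAMMA * max(q[nxt]) - q[state][a])
            state = nxt
    return q


q = train()
policy = ["left" if row[0] > row[1] else "right" for row in q[:-1]]
print(policy)
```

After training, the learned policy should prefer moving right in every non-terminal cell, even though the agent was never told where the goal is.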

Comparing AI Learning Paradigms

To further clarify the distinctions between these fundamental AI algorithm types, consider the following comparison:

| Feature | Supervised Learning | Unsupervised Learning | Reinforcement Learning |
|---|---|---|---|
| Data Type | Labelled data (input-output pairs) | Unlabelled data | Environment, states, actions, rewards |
| Primary Goal | Prediction, Classification, Regression | Pattern Discovery, Clustering, Dimensionality Reduction | Decision Making, Maximising Cumulative Reward |
| Learning Process | Learns a mapping from input to output based on examples | Identifies inherent structures or groupings in data | Learns optimal actions through trial and error and feedback |
| Common Tasks | Spam detection, Image recognition, Credit scoring | Customer segmentation, Anomaly detection, Recommendation systems | Game playing, Robotics, Autonomous navigation |
| Human Intervention | High (for data labelling) | Low to moderate (for interpreting patterns) | Moderate (for setting up environment and reward system) |

The Transformative Impact of AI Algorithms Across Industries

AI algorithms are not merely academic curiosities; they are foundational not only for the ongoing development of intelligent systems but also for the profound transformation of entire industries. From revolutionising healthcare and finance to optimising transport and logistics, these algorithms enable the analysis of massive volumes of data, the optimisation of intricate processes, and the making of highly informed decisions. Their ability to process information at speeds and scales far beyond human capability drives unprecedented efficiency and innovation.

Healthcare Revolutionised

In the healthcare sector, machine learning algorithms are proving invaluable. They can assist in diagnosing diseases from medical images (such as X-rays, MRIs, and CT scans) with an accuracy that, in some studies and on specific tasks, has matched or exceeded that of specialist clinicians, sometimes detecting subtle indicators missed by the human eye. Beyond diagnosis, AI algorithms are used for predicting disease outbreaks, personalising treatment plans based on a patient's genetic makeup, accelerating drug discovery by simulating molecular interactions, and even optimising hospital bed allocation to improve patient flow.

Financial Insights and Security

The financial world has been significantly reshaped by algorithms. They are critical for sophisticated fraud detection systems, identifying suspicious transactions in real-time by analysing patterns that deviate from normal behaviour. Algorithmic trading, where computer programs execute trades at high speeds based on complex market analysis, is now standard practice in global markets. Furthermore, algorithms aid in credit risk assessment, helping banks and lenders make more accurate decisions about loan eligibility, and in personalised financial advice, tailoring investment strategies to individual client profiles.
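
The idea of flagging transactions that deviate from normal behaviour can be sketched with a simple z-score check. This is a deliberately naive stand-in for production fraud systems, which combine many signals. The amounts, the function name `flag_unusual`, and the threshold of three standard deviations are all assumptions chosen for illustration.

```python
import statistics


def flag_unusual(history, new_amounts, threshold=3.0):
    """Return new amounts lying more than `threshold` standard
    deviations from the mean of past transactions."""
    mean = statistics.mean(history)
    spread = statistics.stdev(history)
    return [x for x in new_amounts if abs(x - mean) / spread > threshold]


# Hypothetical past card payments for one customer.
history = [20, 25, 22, 19, 24, 21, 23]
print(flag_unusual(history, [26, 500]))  # [500]
```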

Smart Transportation and Logistics

The transport sector is undergoing a massive shift thanks to algorithms. Self-driving cars, a pinnacle of AI engineering, rely on complex algorithms for perception (understanding their surroundings), decision-making (planning routes and reacting to obstacles), and control (executing movements). Beyond autonomous vehicles, algorithms are optimising logistics and supply chains, finding the most efficient delivery routes, managing warehouse inventories, and predicting demand fluctuations. This leads to reduced fuel consumption, faster deliveries, and overall greater autonomy and efficiency in moving goods and people.

Frequently Asked Questions (FAQs) About Algorithms

- What is the simplest way to understand an algorithm?

- Think of an algorithm as a very detailed recipe, or the instructions for assembling flat-pack furniture. It tells you exactly what steps to take, in what order, to achieve a specific outcome. If you follow the instructions precisely with the same starting materials, you'll get the same result every time.

- Are algorithms always complex?

- Not at all! A simple algorithm could be a set of instructions for making a cup of tea (boil water, add teabag, pour water, add milk/sugar). While many modern algorithms, especially in AI, are incredibly complex, the core concept applies to any step-by-step process designed to solve a problem.

- How do algorithms learn?

- In machine learning, algorithms 'learn' by identifying patterns and relationships in data. For example, in supervised learning, they learn from examples where the correct answer is provided. In reinforcement learning, they learn by trial and error, getting 'rewards' for correct actions and 'penalties' for incorrect ones, gradually optimising their behaviour.

- Can algorithms be biased?

- Yes, absolutely. Algorithms learn from the data they are fed. If that data contains historical biases (e.g., reflecting societal inequalities), the algorithm can inadvertently learn and perpetuate those biases in its decisions. This is a significant ethical concern in AI development, and researchers are actively working on methods to detect and mitigate algorithmic bias.

- What is the future of algorithms?

- The future of algorithms is one of increasing sophistication and integration into every aspect of life. We can expect more advanced AI algorithms that can understand context better, reason more like humans, and perform complex tasks with even greater autonomy. They will continue to drive innovation in fields like personalised medicine, climate modelling, and the development of truly intelligent robotic systems, making our world more interconnected and efficient.

The Indispensable Core of Our Intelligent Future

Algorithms are undeniably the cornerstone of artificial intelligence, enabling machines to become progressively smarter, more adaptive, and increasingly autonomous. They are the silent architects of our digital landscape, processing the vast streams of data that define modern existence. By continuing to refine, develop, and innovate these algorithms, we are not just building better technology; we are paving the way for unprecedented innovations that have the potential to transform our society in profound and previously unimaginable ways. Understanding these fundamental mechanisms is not merely for computer scientists; it is essential for anyone interested in the future of technology and its ever-growing impact on our daily lives. As algorithms continue to evolve, so too will our capabilities as a civilisation, shaping a future driven by intelligent systems.
